Well, the information about which kernel version we’re dealing with on Rock 5B has been posted multiple times in this thread (as part of the sbc-bench output, for example). But it’s important to understand how this kernel got to this version since, as already said, it’s forward ported from 2.6.32 on and is not the official 5.10 LTS kernel.

ROCK 5B Debug Party Invitation

1st quick check for networking. It’s an RTL8125BG connected via PCIe Gen2 x1:

LnkCap: Port #0, Speed 5GT/s, Width x1, ASPM L0s L1, Exit Latency L0s unlimited, L1 <64us

ClockPM+ Surprise- LLActRep- BwNot- ASPMOptComp+

LnkCtl: ASPM L1 Enabled; RCB 64 bytes Disabled- CommClk+

ExtSynch- ClockPM+ AutWidDis- BWInt- AutBWInt-

LnkSta: Speed 5GT/s (ok), Width x1 (ok)

TrErr- Train- SlotClk+ DLActive- BWMgmt- ABWMgmt-

In TX direction everything is fine even when letting iperf3 run on a little core:

root@rock-5b:/home/rock# taskset -c 5 iperf3 -c 192.168.83.63

Connecting to host 192.168.83.63, port 5201

[ 5] local 192.168.83.113 port 47964 connected to 192.168.83.63 port 5201

[ ID] Interval Transfer Bitrate Retr Cwnd

[ 5] 0.00-1.00 sec 272 MBytes 2.28 Gbits/sec 41 573 KBytes

[ 5] 1.00-2.00 sec 281 MBytes 2.36 Gbits/sec 0 701 KBytes

[ 5] 2.00-3.00 sec 281 MBytes 2.36 Gbits/sec 0 728 KBytes

[ 5] 3.00-4.00 sec 280 MBytes 2.35 Gbits/sec 0 735 KBytes

[ 5] 4.00-5.00 sec 281 MBytes 2.36 Gbits/sec 0 738 KBytes

[ 5] 5.00-6.00 sec 280 MBytes 2.35 Gbits/sec 0 741 KBytes

[ 5] 6.00-7.00 sec 280 MBytes 2.35 Gbits/sec 0 745 KBytes

[ 5] 7.00-8.00 sec 281 MBytes 2.36 Gbits/sec 0 748 KBytes

[ 5] 8.00-9.00 sec 280 MBytes 2.35 Gbits/sec 0 749 KBytes

[ 5] 9.00-10.00 sec 281 MBytes 2.36 Gbits/sec 0 749 KBytes

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.00 sec 2.73 GBytes 2.35 Gbits/sec 41 sender

[ 5] 0.00-10.00 sec 2.73 GBytes 2.35 Gbits/sec receiver

iperf Done.

root@rock-5b:/home/rock# taskset -c 1 iperf3 -c 192.168.83.63

Connecting to host 192.168.83.63, port 5201

[ 5] local 192.168.83.113 port 47968 connected to 192.168.83.63 port 5201

[ ID] Interval Transfer Bitrate Retr Cwnd

[ 5] 0.00-1.00 sec 227 MBytes 1.90 Gbits/sec 244 479 KBytes

[ 5] 1.00-2.00 sec 282 MBytes 2.37 Gbits/sec 0 689 KBytes

[ 5] 2.00-3.00 sec 280 MBytes 2.35 Gbits/sec 0 730 KBytes

[ 5] 3.00-4.00 sec 280 MBytes 2.35 Gbits/sec 0 744 KBytes

[ 5] 4.00-5.00 sec 281 MBytes 2.36 Gbits/sec 0 749 KBytes

[ 5] 5.00-6.00 sec 280 MBytes 2.35 Gbits/sec 0 755 KBytes

[ 5] 6.00-7.00 sec 281 MBytes 2.36 Gbits/sec 0 759 KBytes

[ 5] 7.00-8.00 sec 280 MBytes 2.35 Gbits/sec 0 762 KBytes

[ 5] 8.00-9.00 sec 281 MBytes 2.36 Gbits/sec 0 765 KBytes

[ 5] 9.00-10.00 sec 280 MBytes 2.35 Gbits/sec 0 768 KBytes

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.00 sec 2.69 GBytes 2.31 Gbits/sec 244 sender

[ 5] 0.00-10.00 sec 2.69 GBytes 2.31 Gbits/sec receiver

iperf Done.

But in RX direction performance sucks with defaults. IRQs 133 and 149 are on little cores. And even when moving them to big cores I’m stuck at 510 Mbits/sec. Also the weird cpufreq behaviour (even with performance cpufreq governor clocking the cores as low as 408 MHz) doesn’t help.
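For anyone wanting to repeat the IRQ experiment: IRQ affinity can be set through /proc/irq/<n>/smp_affinity, which takes a hex CPU bitmask. A minimal sketch, assuming the big cores are CPUs 4-7 (as the taskset and cpufreq policy numbers elsewhere in this thread suggest); the helper name cpus_to_mask is made up for illustration:

```shell
# Hypothetical helper: turn a list of CPU numbers into the hex bitmask
# format that /proc/irq/<n>/smp_affinity expects.
cpus_to_mask() {
    mask=0
    for cpu in "$@"; do
        mask=$(( mask | (1 << cpu) ))
    done
    printf '%x\n' "$mask"
}

# Example (as root), pinning the two IRQs mentioned above to CPUs 4-7:
#   cpus_to_mask 4 5 6 7 > /proc/irq/133/smp_affinity
#   cpus_to_mask 4 5 6 7 > /proc/irq/149/smp_affinity
# Check the result with: cat /proc/irq/133/smp_affinity_list
```

If an irqbalance daemon is running it may move the IRQs again, so that is worth checking before comparing numbers.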

This is some stuff for deeper investigation. But let’s check PCIe power management first:

root@rock-5b:/home/rock# cat /sys/module/pcie_aspm/parameters/policy

default performance [powersave] powersupersave

Well, that doesn’t look right. Let’s switch to default et voilà:

Even on a little core the 2.5GbE link is now saturated:

root@rock-5b:/home/rock# taskset -c 1 iperf3 -R -c 192.168.83.63

Connecting to host 192.168.83.63, port 5201

Reverse mode, remote host 192.168.83.63 is sending

[ 5] local 192.168.83.113 port 47980 connected to 192.168.83.63 port 5201

[ ID] Interval Transfer Bitrate

[ 5] 0.00-1.00 sec 272 MBytes 2.29 Gbits/sec

[ 5] 1.00-2.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 2.00-3.00 sec 281 MBytes 2.35 Gbits/sec

[ 5] 3.00-4.00 sec 281 MBytes 2.35 Gbits/sec

[ 5] 4.00-5.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 5.00-6.00 sec 279 MBytes 2.34 Gbits/sec

[ 5] 6.00-7.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 7.00-8.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 8.00-9.00 sec 281 MBytes 2.35 Gbits/sec

[ 5] 9.00-10.00 sec 280 MBytes 2.35 Gbits/sec

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate

[ 5] 0.00-10.00 sec 2.73 GBytes 2.35 Gbits/sec sender

[ 5] 0.00-10.00 sec 2.73 GBytes 2.34 Gbits/sec receiver

iperf Done.

Some experiments with USB. Connected two SSDs in UAS capable USB3 enclosures:

root@rock-5b:/home/rock# lsusb -t

/: Bus 08.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 5000M

|__ Port 1: Dev 2, If 0, Class=Mass Storage, Driver=uas, 5000M

/: Bus 06.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 5000M

|__ Port 1: Dev 2, If 0, Class=Mass Storage, Driver=uas, 5000M

root@rock-5b:/home/rock# lsusb

Bus 006 Device 002: ID 174c:55aa ASMedia Technology Inc. Name: ASM1051E SATA 6Gb/s bridge, ASM1053E SATA 6Gb/s bridge, ASM1153 SATA 3Gb/s bridge, ASM1153E SATA 6Gb/s bridge

Bus 008 Device 002: ID 152d:3562 JMicron Technology Corp. / JMicron USA Technology Corp. JMS567 SATA 6Gb/s bridge

The attempt to create an mdraid0 (to test for bottlenecks with the USB3 ports) seems to fail: dmesg output

I know, most readers will now recommend to ‘blacklist UAS’, but the symptoms may just be related to some underpowering. Don’t know yet. At least there’s room for improvement (appending coherent_pool=2M to extlinux.conf).

I think in newer kernels coherent_pool auto-expands as needed and that setting is merely a starting point, so it shouldn’t hurt. I remember reading somewhere that it auto-expands but cannot remember in which version that was introduced.

Well, adjusting this (and providing one of the two USB3 enclosures with own power) fixed the issues.
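To check whether the parameter actually made it onto the kernel command line after editing the append line in extlinux.conf, something like the following can be used. This is only a sketch; the helper name coherent_pool_of is hypothetical:

```shell
# Hypothetical helper: print the coherent_pool value found in a kernel
# command line string, or "unset" if the parameter is absent.
coherent_pool_of() {
    for arg in $1; do
        case "$arg" in
            coherent_pool=*) echo "${arg#coherent_pool=}"; return 0 ;;
        esac
    done
    echo "unset"
}

# On the board itself:
#   coherent_pool_of "$(cat /proc/cmdline)"
```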

But it seems every tweak I added over the years to Armbian (like coherent pool size or IRQ affinity and ondemand governor tweaks) needs to be applied to Radxa’s OS images too.

Testing the raid0 with a simple iozone -e -I -a -s 1000M -r 1024k -r 16384k -i 0 -i 1:

kB reclen write rewrite read reread

1024000 1024 258830 270261 341979 344249

1024000 16384 270022 271088 667757 679947

That was running on a little core and is total crap. Now on a big core (by prefixing the iozone call with taskset -c 7):

kB reclen write rewrite read reread

1024000 1024 475382 457597 374120 374863

1024000 16384 777913 771802 736511 734899

Still crappy but when checking with sbc-bench -m it’s obvious that CPU clockspeeds don’t ramp up quickly and to the max:

Time big.LITTLE load %cpu %sys %usr %nice %io %irq Temp

19:43:03: 408/1008MHz 0.35 3% 1% 0% 0% 1% 0% 32.4°C

19:43:08: 408/1008MHz 0.33 0% 0% 0% 0% 0% 0% 32.4°C

19:43:13: 816/1200MHz 0.38 13% 3% 0% 0% 8% 1% 32.4°C

19:43:18: 1416/1200MHz 0.43 13% 2% 0% 0% 10% 0% 32.4°C

19:43:23: 600/1008MHz 0.56 14% 4% 0% 0% 7% 1% 33.3°C

19:43:28: 408/1008MHz 0.51 1% 0% 0% 0% 0% 0% 32.4°C

One echo 1 > /sys/devices/system/cpu/cpufreq/policy6/ondemand/io_is_busy later we’re at

kB reclen write rewrite read reread

1024000 1024 604081 603160 505333 506815

1024000 16384 791865 789173 775160 778706

Still not perfect (less than 800 MB/sec) but at least the big core immediately switches to highest clockspeed:

Time big.LITTLE load %cpu %sys %usr %nice %io %irq Temp

19:50:44: 408/1008MHz 0.00 2% 0% 0% 0% 0% 0% 32.4°C

19:50:49: 2400/1800MHz 0.00 5% 1% 0% 0% 3% 0% 32.4°C

19:50:54: 2400/1800MHz 0.08 12% 1% 0% 0% 11% 0% 32.4°C

19:50:59: 2400/1800MHz 0.15 13% 2% 0% 0% 9% 0% 34.2°C

19:51:04: 408/1008MHz 0.14 2% 0% 0% 0% 1% 0% 32.4°C

But 790 MB/s are close to what can be achieved with a RAID0 over two USB3 SuperSpeed buses since each bus maxes out at slightly above 400 MB/s.

Moral of the story: if you’re shipping with the ondemand cpufreq governor you need to take care of io_is_busy and friends.
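A quick way to see where a given image stands is to list the governor and the io_is_busy flag per cpufreq policy. A rough sketch, assuming the per-policy ondemand directory layout used in the paths in this thread; show_io_is_busy is a made-up name, and the optional argument only exists so the function can be pointed at a copy of the tree:

```shell
# Hypothetical helper: list every cpufreq policy under a sysfs root and show
# its governor plus the ondemand io_is_busy flag (n/a if the file is absent).
show_io_is_busy() {
    root="${1:-/sys/devices/system/cpu/cpufreq}"
    for policy in "$root"/policy*; do
        [ -r "$policy/scaling_governor" ] || continue
        gov=$(cat "$policy/scaling_governor")
        if [ -r "$policy/ondemand/io_is_busy" ]; then
            busy=$(cat "$policy/ondemand/io_is_busy")
        else
            busy="n/a"
        fi
        echo "${policy##*/}: governor=$gov io_is_busy=$busy"
    done
}

# On the board itself: show_io_is_busy
```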

After adding the following to /etc/rc.local even on a little core storage performance is ok-ish:

echo default >/sys/module/pcie_aspm/parameters/policy

for cpufreqpolicy in 0 4 6 ; do

echo 1 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/io_is_busy

echo 25 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/up_threshold

echo 10 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/sampling_down_factor

echo 200000 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/sampling_rate

done

This is the result tested with taskset -c 1 iozone -e -I -a -s 1000M -r 1024k -r 16384k -i 0 -i 1:

kB reclen write rewrite read reread

1024000 1024 524857 526483 458726 459194

1024000 16384 780470 774856 733638 734297

One reboot later with the JMS567 enclosure solely powered by the board we’re back in trouble land:

[ 126.480465] sd 1:0:0:0: [sdb] tag#17 uas_eh_abort_handler 0 uas-tag 1 inflight: CMD OUT

[ 126.480476] sd 1:0:0:0: [sdb] tag#17 CDB: opcode=0x2a 2a 00 00 f6 30 00 00 04 00 00

[ 162.321748] sd 1:0:0:0: [sdb] tag#5 uas_eh_abort_handler 0 uas-tag 2 inflight: CMD

[ 162.321758] sd 1:0:0:0: [sdb] tag#5 CDB: opcode=0x35 35 00 00 00 00 00 00 00 00 00

[ 162.338423] scsi host1: uas_eh_device_reset_handler start

[ 167.642039] xhci-hcd xhci-hcd.10.auto: Timeout while waiting for setup device command

[ 167.848680] usb 8-1: reset SuperSpeed Gen 1 USB device number 2 using xhci-hcd

[ 167.867319] scsi host1: uas_eh_device_reset_handler success

[ 198.162935] sd 1:0:0:0: [sdb] tag#0 uas_eh_abort_handler 0 uas-tag 1 inflight: CMD OUT

[ 198.162945] sd 1:0:0:0: [sdb] tag#0 CDB: opcode=0x2a 2a 00 00 f6 3c 00 00 04 00 00

This is not a UAS problem but underpowering. At least the board is stuck in I/O now and no benchmark is able to run. Well, since USB3 storage sucks anyway, time to move on to something else…

The GPU, I guess, would be of interest on Linux: is this a Rockchip BSP kernel with Rockchip blobs, or are drivers currently absent?

Well, without adding coherent_pool=2M to extlinux.conf the board does not even boot when the USB3 attached SSDs are connected. This is with an mdraid0 (so zero practical relevance for future Rock 5B users):

root@rock-5b:/mnt/md0# cat /proc/mdstat

Personalities : [linear] [multipath] [raid0] [raid1] [raid6] [raid5] [raid4] [raid10]

md127 : active raid0 sdb[0] sda[1]

234307584 blocks super 1.2 512k chunks

unused devices: <none>

It’s only recent, sometime last year; I just cannot remember which revision it went in.

I cannot even remember why I was reading about it, but probably due to being on 5.10.

I only mentioned it because these tweaks should probably not be included in the Radxa image as-is, but they should definitely be documented as tweaks and fixes.

Some consumption data…

I’m measuring with a NetIO PowerBox 4KF in a rather time-consuming process, that means ‘at the wall’ with the charger included and with idle values averaged over 4 minutes. I tested with 2 chargers so far:

- RPi 15W USB-C power brick

- Apple 94W USB-C charger

When checking with sensors tcpm_source_psy_4_0022-i2c-4-22 it looks like this with the RPi part:

in0: 5.00 V (min = +5.00 V, max = +5.00 V)

curr1: 3.00 A (max = +3.00 A)

and like this with the Apple part:

in0: 9.00 V (min = +9.00 V, max = +9.00 V)

curr1: 3.00 A (max = +3.00 A)

27W could become a problem with lots of peripherals but @hipboi said the whole USB PD negotiation thing should be configurable via DT or maybe even at runtime.
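The 27W figure is simply the negotiated voltage times the current limit reported by sensors. As a worked example (pd_watts is a hypothetical helper, not part of any tool mentioned here):

```shell
# Hypothetical helper: power in watts from the in0 (volts) and curr1 (amps)
# values that sensors reports for the negotiated USB PD contract.
pd_watts() {
    awk -v v="$1" -v a="$2" 'BEGIN { printf "%.1f\n", v * a }'
}

pd_watts 5.00 3.00   # RPi power brick: 15.0
pd_watts 9.00 3.00   # Apple charger:   27.0
```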

Measured with RPi power brick and 2.5GbE network connection:

- No fan: idle consumption: 2390mW

- With fan: idle consumption: 3100mW

That means the little fan on my dev sample consumes 700mW that can be subtracted from the numbers (given Radxa will provide a quality metal case that dissipates heat out of the enclosure so no fan is needed).

Now with the Apple Charger:

- fan / 2.5GbE: idle consumption: 3270mW

- fan and only GbE: idle consumption: 2960mW

The Apple charger obviously needs a little more juice for itself (+ ~150mW compared to the RPi power brick) and powering the 2.5GbE PHY vs. only Gigabit Ethernet speeds results in 300mW consumption difference.

- echo default >/sys/module/pcie_aspm/parameters/policy --> idle consumption: 3380mW

Switching from powersave to default with PCIe powermanagement (needed to get advertised network speeds) adds ~100mW to idle consumption.
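For reference, the rounded deltas quoted in this post (700mW, ~150mW, 300mW, ~100mW) come from the following raw differences between the idle measurements. The helper name delta_mw is made up; the numbers are the ones listed above:

```shell
# Hypothetical helper: difference between two idle measurements in mW.
delta_mw() {
    echo $(( $1 - $2 ))
}

echo "fan (RPi brick, 2.5GbE):       $(delta_mw 3100 2390) mW"
echo "Apple charger vs. RPi brick:   $(delta_mw 3270 3100) mW"
echo "2.5GbE vs. GbE link:           $(delta_mw 3270 2960) mW"
echo "ASPM default vs. powersave:    $(delta_mw 3380 3270) mW"
```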

As such I propose the following changes in all of Radxa’s OS images (preferably ASAP but at least before review samples are sent out to those YT clowns like ExplainingComputers, ETA Prime and so on):

- setting /sys/module/pcie_aspm/parameters/policy to default (w/o network RX performance is ruined)

- appending coherent_pool=2M to extlinux.conf (w/o most probably ‘UAS hassles’)

- configuring ondemand cpufreq governor with io_is_busy and friends (w/o storage performance sucks)
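To verify the first item on a running image: the kernel marks the active ASPM policy with square brackets in the policy file. A small sketch to pull it out (active_aspm_policy is a hypothetical helper name):

```shell
# Hypothetical helper: extract the bracketed (active) entry from the
# contents of /sys/module/pcie_aspm/parameters/policy.
active_aspm_policy() {
    printf '%s\n' "$1" | sed -n 's/.*\[\([a-z]*\)\].*/\1/p'
}

# On the board itself:
#   active_aspm_policy "$(cat /sys/module/pcie_aspm/parameters/policy)"
```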

Would be great if you put it in the wiki.

In my tests on the USB cable, I measured 1.6W in idle with no fan, and 2.6W with it. At higher loads under 5V, the difference was a bit less but the fan wouldn’t spin as fast. When powered under 12V however, the fan speed remains stable but I can’t monitor the current. This seems consistent with your measurements, and you can count on 500-800mW for your PSU

Yeah, too bad I don’t have the Apple 30W charger around which was the most efficient charger I ever had in my hands.

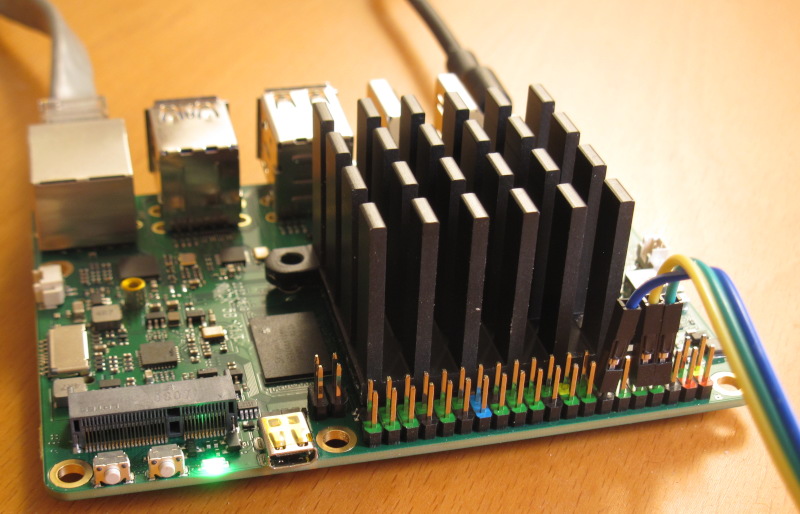

BTW: I removed the fansink in the meantime and tested without any cooling at an ambient temp of ~26°C (explanation of results/numbers):

| Cooling | Clockspeed | 7-zip | AES-256 (16 KB) | memcpy | memset | kH/s |
|---|---|---|---|---|---|---|
| with fansink | 2350/1830 | 16450 | 1337540 | 10830 | 29220 | 25.31 |
| w/o any cooling | 2010/1070 | 15290 | 1316480 | 10890 | 28430 | 22.14 |

This is just impressive since only slight performance decreases occurred even with no cooling at all. So there’s definite hope that Radxa will come up with a metal enclosure passively dissipating the heat away and still providing high sustained performance.

When running this demanding stuff for a longer time it looks like this:

Time big.LITTLE load %cpu %sys %usr %nice %io %irq Temp

11:17:29: 2208/ 408MHz 7.63 89% 0% 89% 0% 0% 0% 84.1°C

11:17:34: 2016/1416MHz 7.82 76% 1% 74% 0% 0% 0% 85.0°C

11:17:39: 2016/1416MHz 7.84 100% 0% 99% 0% 0% 0% 85.0°C

11:17:44: 2208/1416MHz 7.29 62% 0% 61% 0% 0% 0% 84.1°C

11:17:49: 2016/1416MHz 7.35 94% 1% 92% 0% 0% 0% 84.1°C

11:17:54: 2016/1416MHz 7.40 100% 0% 99% 0% 0% 0% 85.0°C

11:17:59: 2016/1200MHz 6.89 54% 1% 53% 0% 0% 0% 84.1°C

11:18:04: 1800/1416MHz 7.14 99% 2% 97% 0% 0% 0% 85.0°C

11:18:09: 2016/1416MHz 7.29 97% 2% 95% 0% 0% 0% 85.0°C

11:18:15: 2208/ 408MHz 7.34 96% 0% 96% 0% 0% 0% 84.1°C

11:18:20: 2016/1416MHz 7.56 75% 1% 74% 0% 0% 0% 85.0°C

11:18:25: 2016/1416MHz 7.59 95% 0% 94% 0% 0% 0% 85.0°C

11:18:30: 2016/1416MHz 7.30 67% 0% 67% 0% 0% 0% 83.2°C

11:18:35: 2016/1416MHz 7.36 96% 1% 95% 0% 0% 0% 85.0°C

11:18:40: 2208/ 408MHz 7.41 86% 0% 86% 0% 0% 0% 84.1°C

11:18:45: 2016/1416MHz 7.62 76% 2% 74% 0% 0% 0% 85.0°C

11:18:50: 2016/1416MHz 7.65 96% 1% 95% 0% 0% 0% 85.0°C

11:18:55: 2208/ 408MHz 7.68 78% 0% 78% 0% 0% 0% 84.1°C

11:19:00: 2016/1200MHz 7.78 76% 2% 74% 0% 0% 0% 84.1°C

11:19:05: 2016/1200MHz 7.88 99% 1% 97% 0% 0% 0% 84.1°C

11:19:10: 2016/1416MHz 7.89 95% 1% 94% 0% 0% 0% 85.0°C

11:19:15: 2208/ 408MHz 7.90 76% 0% 76% 0% 0% 0% 84.1°C

11:19:20: 2016/1416MHz 8.15 90% 1% 89% 0% 0% 0% 85.0°C

11:19:25: 2016/1416MHz 8.14 100% 0% 99% 0% 0% 0% 85.0°C

11:19:31: 2016/1200MHz 7.81 67% 1% 66% 0% 0% 0% 84.1°C

11:19:36: 1608/1416MHz 7.82 93% 0% 93% 0% 0% 0% 84.1°C

11:19:41: 2208/1608MHz 7.67 70% 0% 69% 0% 0% 0% 83.2°C

11:19:46: 2016/1200MHz 7.86 87% 1% 85% 0% 0% 0% 84.1°C

11:19:51: 2016/1416MHz 7.87 96% 0% 95% 0% 0% 0% 85.0°C

11:19:56: 2208/1608MHz 7.56 59% 0% 59% 0% 0% 0% 82.2°C

11:20:01: 2016/1200MHz 7.68 94% 2% 91% 0% 0% 0% 84.1°C

11:20:06: 2208/1200MHz 7.86 99% 1% 97% 0% 0% 0% 84.1°C

11:20:11: 2016/1416MHz 7.87 96% 1% 94% 0% 0% 0% 85.0°C

11:20:16: 2016/1416MHz 7.56 72% 0% 71% 0% 0% 0% 85.0°C

11:20:21: 2016/1416MHz 7.84 97% 0% 96% 0% 0% 0% 85.0°C

11:20:26: 2208/ 408MHz 7.85 84% 0% 84% 0% 0% 0% 84.1°C

11:20:31: 2016/1416MHz 7.94 81% 1% 80% 0% 0% 0% 85.0°C

11:20:36: 2016/1416MHz 7.95 100% 0% 99% 0% 0% 0% 85.0°C

11:20:41: 2016/1416MHz 7.63 62% 1% 61% 0% 0% 0% 85.0°C

11:20:46: 2016/1416MHz 7.66 93% 1% 91% 0% 0% 0% 84.1°C

11:20:51: 2208/ 408MHz 7.69 93% 0% 93% 0% 0% 0% 84.1°C

11:20:57: 2016/1200MHz 7.39 61% 1% 59% 0% 0% 0% 84.1°C

11:21:02: 2016/1008MHz 7.68 99% 1% 97% 0% 0% 0% 85.0°C

11:21:07: 2016/1416MHz 7.79 92% 2% 89% 0% 0% 0% 85.0°C

11:21:12: 2208/ 408MHz 7.81 93% 0% 92% 0% 0% 0% 84.1°C

11:21:17: 2016/1200MHz 7.50 77% 1% 75% 0% 0% 0% 84.1°C

11:21:22: 2016/1416MHz 7.54 98% 0% 97% 0% 0% 0% 85.0°C

11:21:27: 2016/1416MHz 7.58 67% 0% 67% 0% 0% 0% 85.0°C

11:21:32: 2016/1416MHz 7.61 96% 0% 95% 0% 0% 0% 85.0°C

11:21:37: 2208/ 408MHz 7.64 83% 0% 83% 0% 0% 0% 84.1°C

11:21:42: 2016/1200MHz 7.35 79% 1% 77% 0% 0% 0% 84.1°C

11:21:47: 2016/1416MHz 7.40 96% 0% 95% 0% 0% 0% 85.0°C

11:21:52: 2208/ 408MHz 7.45 74% 0% 74% 0% 0% 0% 84.1°C

11:21:58: 1800/1200MHz 6.93 79% 2% 77% 0% 0% 0% 85.0°C

11:22:03: 1800/1200MHz 7.10 99% 1% 97% 0% 0% 0% 85.0°C

11:22:08: 2016/1416MHz 7.41 94% 2% 92% 0% 0% 0% 85.0°C

11:22:13: 2016/1416MHz 7.46 72% 0% 72% 0% 0% 0% 84.1°C

11:22:18: 2016/1416MHz 7.66 97% 0% 96% 0% 0% 0% 85.0°C

11:22:23: 2208/ 408MHz 7.69 95% 0% 95% 0% 0% 0% 84.1°C

11:22:28: 1800/1416MHz 7.39 70% 1% 69% 0% 0% 0% 85.0°C

11:22:33: 1800/1416MHz 7.44 100% 0% 99% 0% 0% 0% 84.1°C

11:22:38: 2016/1416MHz 7.99 65% 0% 65% 0% 0% 0% 84.1°C

11:22:43: 2016/1416MHz 7.99 91% 1% 89% 0% 0% 0% 84.1°C

11:22:48: 2016/1416MHz 7.99 100% 0% 99% 0% 0% 0% 85.0°C

11:22:53: 2016/1200MHz 8.23 53% 0% 52% 0% 0% 0% 84.1°C

11:22:58: 2016/1200MHz 8.38 99% 1% 97% 0% 0% 0% 84.1°C

11:23:03: 2208/1608MHz 7.87 93% 1% 91% 0% 0% 0% 83.2°C

11:23:08: 2016/1416MHz 7.88 95% 0% 94% 0% 0% 0% 85.0°C

11:23:13: 2016/1416MHz 8.05 72% 0% 71% 0% 0% 0% 85.0°C

11:23:18: 2016/1416MHz 8.04 96% 0% 95% 0% 0% 0% 85.0°C

11:23:24: 2208/ 408MHz 7.72 80% 0% 80% 0% 0% 0% 84.1°C

11:23:29: 1800/1608MHz 7.98 84% 1% 82% 0% 0% 0% 85.0°C

11:23:34: 1800/1416MHz 7.98 100% 0% 99% 0% 0% 0% 84.1°C

11:23:39: 2016/1200MHz 7.98 65% 1% 63% 0% 0% 0% 84.1°C

11:23:44: 1800/1416MHz 8.15 97% 1% 95% 0% 0% 0% 84.1°C

Or in other words: thermal threshold is 85°C and throttling works. But we need to keep in mind that RK3588 is a lot more than just 8 ARM CPU cores. If GPU, I/O, NPU and so on are fully loaded the SoC needs a lot more thermal headroom.
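A long monitoring log like the one above is easier to judge when reduced to a few numbers. A sketch, assuming the column layout shown above (time, big/LITTLE clock, load, percentages, temperature last) and that the output was saved to a file first; summarise_monitor and the file name are made up:

```shell
# Hypothetical helper: summarise sbc-bench -m style lines read from stdin.
summarise_monitor() {
    awk '
        /^[0-9]+:[0-9]+:[0-9]+:/ {
            big  = $2 + 0      # "2016/1416MHz" or "2208/" -> leading number
            temp = $NF + 0     # "85.0°C" -> 85
            if (n == 0 || big < min_big)   min_big = big
            if (n == 0 || temp > max_temp) max_temp = temp
            n++
        }
        END { printf "samples=%d min_big=%dMHz max_temp=%.1fC\n", n, min_big, max_temp }
    '
}

# Usage with a saved log (file name is only an example):
#   summarise_monitor < monitor.log
```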

Ok, we will fix this for the MP version.

Along with the heatsink skewness I hope?)

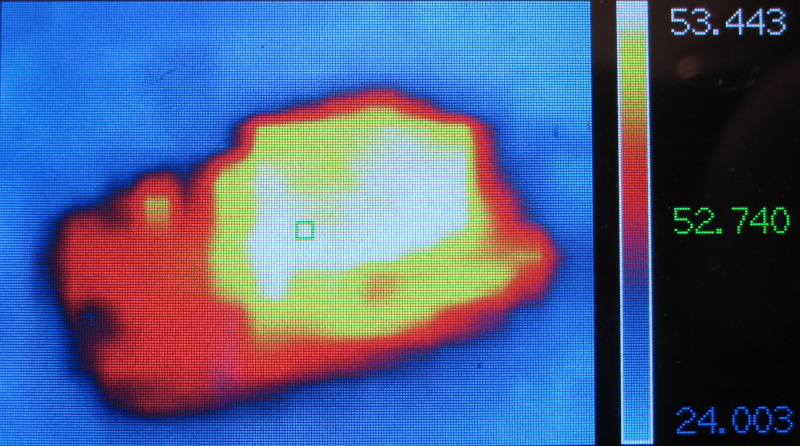

Yes I noticed as well that it’s extremely efficient. Last evening I replaced the fan+heatsink with a larger heatsink attached using thermal tape, a solution that I used with success on the much hotter RK3288. I ran stress-ng for 20 minutes; the SoC temperature stabilised at 76°C after 10-12 minutes, with about 53°C on the heat sink, which is very reasonable:

At least that shows some good stability on the power path and the temperature.

Also I found a bit of info on this PVTM thing that gives me a varying top frequency at boot. It’s a concept I had never heard of, which tries to estimate the “quality” of the silicon based on a free-running clock, the temperature and the voltage, in order to estimate the max frequency that will be supported. Mine has a random frequency at boot because the measurement oscillates between 1742 and 1744, the latter being the next offset.

I find the concept interesting, except that it should then not remove the OPP from the list but just condition them by voltage/temp as well. I.e. if you manage to cool your board or to slightly overvolt it (or both), there’s no reason to deal with the constraint. Anyway I think that just fiddling with the DTB to adjust the threshold will do the job, so for now I don’t really care. And possibly we’ll be able to enable them once boost is enabled; that’s something to check as well.

Last point (I’m grouping all my comments due to the limit of 3/day), I tried to run a network test at 2.5GbE but it ended up being at 1G because the other NIC was an intel X540AT2, and that one only supports 1 or 10G but not 2.5… I ordered a 1/2.5/5/10G one to run more tests. However during this time frame I could notice that queues were totally uneven on Rx (a single CPU was used), so there’s possibly room for improvement using ethtool. Something else to keep on the check list!
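One way to put numbers on the uneven Rx distribution is to total the per-CPU counters in /proc/interrupts for the IRQ lines belonging to the NIC. A sketch under assumptions: the interface name eth0 is a guess, irq_per_cpu is a made-up helper, and it relies on the usual layout (a header row of CPU names, then one row per IRQ):

```shell
# Hypothetical helper: per-CPU interrupt totals for all /proc/interrupts
# lines that match a pattern (for example the NIC name).
irq_per_cpu() {
    awk -v pat="$1" '
        NR == 1 { ncpu = NF; next }            # header row: CPU0 CPU1 ...
        $0 ~ pat {
            for (i = 1; i <= ncpu; i++) total[i] += $(i + 1)
        }
        END { for (i = 1; i <= ncpu; i++) printf "CPU%d %d\n", i - 1, total[i] }
    '
}

# On the board itself (interface name is an assumption):
#   irq_per_cpu eth0 < /proc/interrupts
```

Running it before and after an iperf3 -R run shows which CPUs actually took the interrupts. Whether ethtool or RPS settings can spread them on this board is untested here.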

Yes, yesterday I read chapter 17 in RK3588 TRM-Part2 (other readers beware: ~3700 pages PDF 56 MB in size).

The reason to operate the board today w/o fansink was also to check whether ‘cold boot’ with ‘hot board’ results in different values but I’m still at

cpu cpu0: pvtm=1528

cpu cpu0: pvtm-volt-sel=5

cpu cpu4: pvtm=1784

cpu cpu4: pvtm-volt-sel=7

cpu cpu6: pvtm=1782

cpu cpu6: pvtm-volt-sel=7

which is almost exactly the same (except cpu4) as yesterday with fansink and an idle temp of 30.5°C when booting:

[ 3.117399] cpu cpu0: pvtm=1528

[ 3.117491] cpu cpu0: pvtm-volt-sel=5

[ 3.124529] cpu cpu4: pvtm=1785

[ 3.128495] cpu cpu4: pvtm-volt-sel=7

[ 3.136234] cpu cpu6: pvtm=1782

[ 3.140173] cpu cpu6: pvtm-volt-sel=7
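Comparing these values across boots is less error-prone when the kernel log is reduced to one short line per entry first. A sketch, assuming the message format shown above; pvtm_summary is a hypothetical name:

```shell
# Hypothetical helper: reduce kernel log lines on stdin to "cpuN key=value"
# for the pvtm and pvtm-volt-sel messages, with or without a timestamp.
pvtm_summary() {
    sed -n 's/.*cpu \(cpu[0-9]*\): \(pvtm[-a-z]*=[0-9]*\).*/\1 \2/p'
}

# On the board itself, once per boot, then diff the saved files:
#   dmesg | pvtm_summary > pvtm-boot1.txt
```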

As for the network tunables I’m waiting for your insights

Currently searching a fast enough NVMe SSD…

Nope:

root@rock-5b:/home/rock# dpkg -l | grep -i mali

ii gpg 2.2.19-3ubuntu2 arm64 GNU Privacy Guard -- minimalist public key operations

ii libmnl0:arm64 1.0.4-2 arm64 minimalistic Netlink communication library
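Both hits above are false positives: grep -i mali matches the words "minimalist" and "minimalistic" in the package descriptions. Matching only on the package name column avoids that. A sketch (pkg_name_match is a made-up helper):

```shell
# Hypothetical helper: keep only installed packages whose *name* (column 2
# of dpkg -l output) contains the pattern, ignoring the description text.
pkg_name_match() {
    awk -v pat="$1" '$1 == "ii" && tolower($2) ~ pat'
}

# On the board itself:
#   dpkg -l | pkg_name_match mali
```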

In case you want me to look up other things please feel free to ask!