I think the OPP table is somewhat bogus, a bit like on Amlogic, where it's the internal MCU blob that is really at work.

ROCK 5B Debug Party Invitation

The temp you referred to was clearly labeled ‘single A76 core running 7-ZIP benchmark’; multi-threaded results were already posted yesterday.

The fansink on my board fits really tight, there’s an annoying fan spinning at maximum rpm, and I operate my board standing upright to let convection kick in. And as you can see from the consumption measurements, RK3588 is a different beast than the SoCs we were used to dealing with in the past!

BTW: I really don’t get all this babbling polluting this thread. These are dev samples and Radxa guys looked into drawers and put something on that works. The final product will be shipped with a different heatsink anyway…

I don’t remember the exact measurements, but they were in that range as well. The CPU is barely warm when the fan spins. The CPU doesn’t consume much, which also explains why it still cools well even with an uneven surface.

Quite frankly, I’m not worried about this part at all. Given the number of boards sold that have no mounting holes at all and rely on you gluing the heatsink on top of the CPU, we already have more options here, so let’s not turn this limitation into a big problem. There seem to be few other ways to place all the components on that board anyway, so the CPU could hardly be more centered. One option might be to slightly rotate the heatsink by placing the holes differently, but that’s not easy. I really think that just using a rubber pad of the same height at one extremity will completely solve the problem.

A stick-on heatsink would do; that is another option with the temps you’re posting. Did you find any other fans to connect to the real fan header? I have a few here but am still waiting for delivery.

It’s probably that thermal pad that has made things worse, willy.

I am frankly uncomfortable asking, but could you post the output of

cat /sys/kernel/debug/regulator/regulator_summary

cat /sys/kernel/debug/pinctrl/*/gpio-ranges

cat /sys/kernel/debug/pinctrl/*/pinmux-pins

cat /sys/kernel/debug/pinctrl/*/pinmux-functions

cat /sys/kernel/debug/pinctrl/*/pingroups

cat /sys/kernel/debug/pinctrl/*/pinconf-pins

cat /sys/kernel/debug/pinctrl/*/pinconf-groups

or similar

I have a 16GB model with the following silkscreened on it: ‘2022.05.19 ROCK 5B V1.3’ (it looks exactly like the pictures in the 1st post of this thread, except for the RK3588, which is a different revision):

As a quick reference, here are dmesg (the only interesting stuff I found is around the hyst lines) and the DTS from my board.

root@rock-5b:/home/rock# ( cat /sys/kernel/debug/regulator/regulator_summary ; grep -R . /sys/kernel/debug/pinctrl/ ) | curl -s -F 'f:1=<-' ix.io

http://ix.io/41F1

BTW: grep -R . /sys/kernel/debug/ freezes the board
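Since recursing through all of debugfs hangs the board, a narrower collection limited to the files requested above may be safer. This is only a minimal sketch, assuming debugfs is mounted at /sys/kernel/debug; the function name `collect_debug` and its optional root argument are made up for illustration:

```shell
#!/bin/sh
# collect_debug [ROOT]: print regulator and pinctrl state without
# recursing through the rest of debugfs.
# ROOT defaults to /sys/kernel/debug (assumed mount point).
collect_debug() {
    root=${1:-/sys/kernel/debug}
    for f in "$root"/regulator/regulator_summary \
             "$root"/pinctrl/*/gpio-ranges \
             "$root"/pinctrl/*/pinmux-pins \
             "$root"/pinctrl/*/pinmux-functions \
             "$root"/pinctrl/*/pingroups \
             "$root"/pinctrl/*/pinconf-pins \
             "$root"/pinctrl/*/pinconf-groups; do
        [ -r "$f" ] || continue      # skip unmatched globs and unreadable files
        printf '==> %s <==\n' "$f"   # header so the files can be told apart
        cat "$f"
    done
}
```

Run as root, the output could then be piped to the same paste command as above: `collect_debug | curl -s -F 'f:1=<-' ix.io`.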

Could you please confirm if PCI express indeed works at 3.0 version speeds?

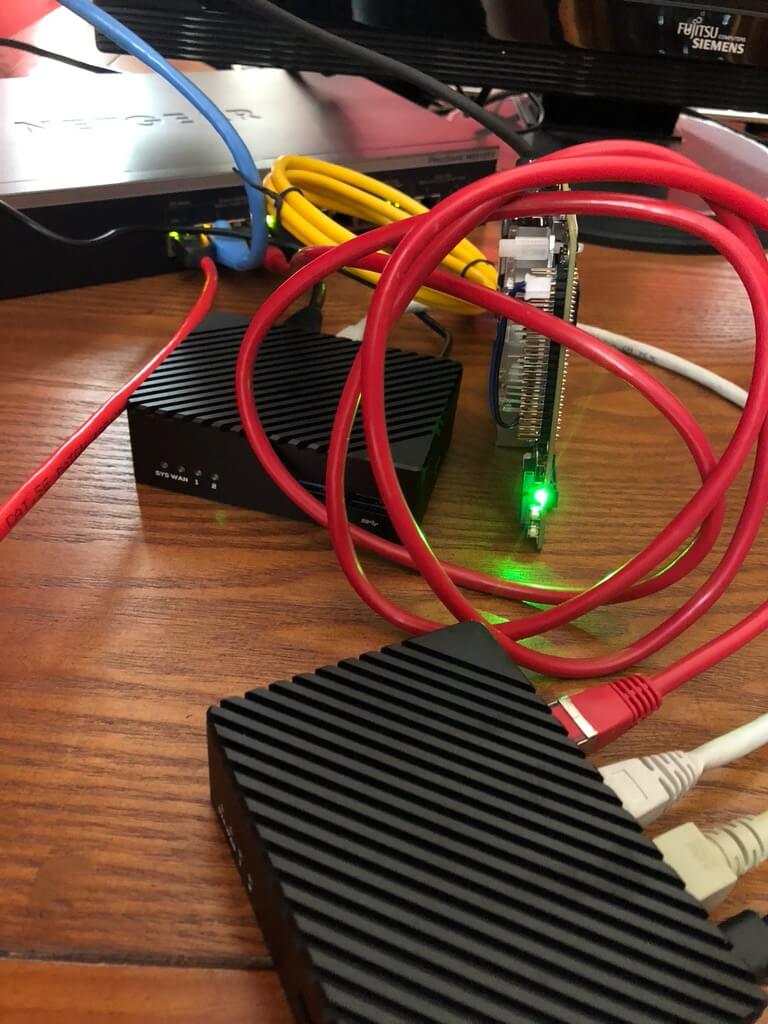

Those devices with the black metal cases must be NanoPi R5S or R6S.

R5S as mentioned above when comparing performance/consumption between RK3568 and RK3588.

I’m about to set up a nice 2.5GbE ‘LAN party’ with those 3 ARM boards and 2 MacBooks with RTL8156 dongles

BTW: never heard of R6S but there’s a little room for speculation about a soon to be announced NanoPi R3S (also based on RK3568).

OK, @willy (who can’t post here since he registered just yesterday) pointed me in this direction:

R6S based on RK3588S

Sadly, the contained kernel 4.4 is already EOL:

[2022-07-05T18:44:44+0200] ueberall_l@desktop01:/tmp% wget "https://github.com/radxa/debos-radxa/releases/download/20220701-0727/rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img.xz"

[…]

[2022-07-05T18:45:47+0200] ueberall_l@desktop01:/tmp% xz -dv rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img.xz

rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img.xz (1/1)

100 % 140,6 MiB / 906,0 MiB = 0,155 92 MiB/s 0:09

[2022-07-05T18:45:59+0200] ueberall_l@desktop01:/tmp% fdisk -l ./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img

Disk ./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img: 905,101 MiB, 950000128 bytes, 1855469 sectors

Units: sectors of 1 * 512 = 512 bytes

Sector size (logical/physical): 512 bytes / 512 bytes

I/O size (minimum/optimal): 512 bytes / 512 bytes

Disklabel type: gpt

Disk identifier: 34D6A485-96AB-4EAE-B781-BEA9FCBC918D

Device Start End Sectors Size Type

./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img1 32768 262143 229376 112M EFI System

./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img2 262144 1855435 1593292 778M Linux filesystem

[2022-07-05T18:46:07+0200] ueberall_l@desktop01:/tmp% mkdir ./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt

[2022-07-05T18:46:46+0200] ueberall_l@desktop01:/tmp% sudo mount -t auto -o loop,offset=$((262144*512)) ./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.img ./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt

[sudo] password for ueberall_l:

[…]

[2022-07-05T18:49:16+0200] ueberall_l@desktop01:/tmp% dpkg-query -W --admindir=./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt/var/lib/dpkg >./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.dpkg-query.txt

[2022-07-05T18:50:11+0200] ueberall_l@desktop01:/tmp% grep ^linux- ./rockpi-s-ubuntu-focal-server-arm64-20220701-0851-gpt.dpkg-query.txt

linux-4.4-rock-pi-s-latest:arm64 4.4.143-69-rockchip

linux-base 4.5ubuntu3

linux-firmware-image-4.4.143-69-rockchip-g8ccef796d27d:arm64 4.4.143-69-rockchip

linux-headers-4.4.143-69-rockchip-g8ccef796d27d:arm64 4.4.143-69-rockchip

linux-image-4.4.143-69-rockchip-g8ccef796d27d:arm64 4.4.143-69-rockchip

[2022-07-05T18:51:35+0200] ueberall_l@desktop01:/tmp%
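The `offset=$((262144*512))` passed to mount in the transcript above is simply the start sector of the second partition (from the fdisk listing) multiplied by the 512-byte sector size. A minimal sketch of that arithmetic; the helper name `part_offset` is hypothetical:

```shell
#!/bin/sh
# part_offset START_SECTOR [SECTOR_SIZE]: byte offset of a partition inside
# a disk image, suitable for "mount -o loop,offset=...".
# SECTOR_SIZE defaults to 512, as reported by fdisk for this image.
part_offset() {
    start_sector=$1
    sector_size=${2:-512}
    echo $((start_sector * sector_size))
}
```

For the image above, `part_offset 262144` yields 134217728, i.e. the root filesystem starts 128 MiB into the file.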

Given that competing SBCs have patches for the 5.15 kernel (and newer ones) which are also slowly finding their way upstream (if they have not been included already), I’m afraid that this is simply way too old. The system might run, but kernel 4.4 was published 6.5 years ago and, being EOL, isn’t tested against current software packages/applications unless you pay someone to do it.

Please at least consider including a kernel that is not already EOL or won’t be EOL by the time the hardware will be available (IMHO, even kernel 4.9 will not really fit the bill, it’ll require kernel 4.14 or newer).

EDIT: As pointed out below by tkaiser, I downloaded the wrong image, so the rant is misplaced and does not apply to the ROCK5B (only to other/older models).

But we’re talking about Rock 5B / RK3588 here. And deal with a forward ported version 5.10 from Rockchip (not to be confused with official 5.10 LTS kernel which is a different thing).

Everyone does what they want; however, when you get targets for free, you can test them with the ammunition that comes with them.

My bad! Using the correct image, you’re presented with

[2022-07-05T19:47:05+0200] ueberall_l@desktop01:/tmp% grep ^linux- ./rock-5b-ubuntu-focal-server-arm64-20220701-0826-gpt.dpkg-query.txt

linux-base 4.5ubuntu3

linux-headers-5.10.66-11-rockchip-geee2b32138fd:arm64 5.10.66-11-rockchip

linux-image-5.10.66-11-rockchip-geee2b32138fd:arm64 5.10.66-11-rockchip

[2022-07-05T19:47:32+0200] ueberall_l@desktop01:/tmp%

That’s an acceptable starting point. If you can trust the version number, that is. (EDIT: tkaiser is simply too fast to reply.)

Well, the information about which kernel version we’re dealing with on Rock 5B appears multiple times in this thread (as part of the sbc-bench output, for example). But it’s important to understand how this kernel got to this version since, as already said, it’s forward ported from 2.6.32 on and not the official 5.10 LTS kernel.

1st quick check for networking. It’s an RTL8125BG connected via PCIe Gen2 x1:

LnkCap: Port #0, Speed 5GT/s, Width x1, ASPM L0s L1, Exit Latency L0s unlimited, L1 <64us

ClockPM+ Surprise- LLActRep- BwNot- ASPMOptComp+

LnkCtl: ASPM L1 Enabled; RCB 64 bytes Disabled- CommClk+

ExtSynch- ClockPM+ AutWidDis- BWInt- AutBWInt-

LnkSta: Speed 5GT/s (ok), Width x1 (ok)

TrErr- Train- SlotClk+ DLActive- BWMgmt- ABWMgmt-
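As a sanity check on whether a Gen2 x1 link is sufficient for 2.5GbE: PCIe Gen2 signals at 5 GT/s per lane with 8b/10b encoding, so 8 of every 10 transferred bits carry payload. A rough sketch of that arithmetic (the function name `pcie_gen2_mbit` is made up, and packet/protocol overhead is ignored):

```shell
#!/bin/sh
# pcie_gen2_mbit LANES: line rate in Mbit/s of a PCIe Gen2 link after
# 8b/10b encoding, before any TLP/DLLP protocol overhead.
pcie_gen2_mbit() {
    lanes=$1
    # 5000 MT/s per lane, 8 payload bits per 10 bits on the wire
    echo $((5000 * 8 / 10 * lanes))
}
```

`pcie_gen2_mbit 1` gives 4000 Mbit/s, which leaves headroom above the 2500 Mbit/s the NIC needs, so the link shown by lspci should not be the limiting factor.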

In TX direction everything is fine even when letting iperf3 run on a little core:

root@rock-5b:/home/rock# taskset -c 5 iperf3 -c 192.168.83.63

Connecting to host 192.168.83.63, port 5201

[ 5] local 192.168.83.113 port 47964 connected to 192.168.83.63 port 5201

[ ID] Interval Transfer Bitrate Retr Cwnd

[ 5] 0.00-1.00 sec 272 MBytes 2.28 Gbits/sec 41 573 KBytes

[ 5] 1.00-2.00 sec 281 MBytes 2.36 Gbits/sec 0 701 KBytes

[ 5] 2.00-3.00 sec 281 MBytes 2.36 Gbits/sec 0 728 KBytes

[ 5] 3.00-4.00 sec 280 MBytes 2.35 Gbits/sec 0 735 KBytes

[ 5] 4.00-5.00 sec 281 MBytes 2.36 Gbits/sec 0 738 KBytes

[ 5] 5.00-6.00 sec 280 MBytes 2.35 Gbits/sec 0 741 KBytes

[ 5] 6.00-7.00 sec 280 MBytes 2.35 Gbits/sec 0 745 KBytes

[ 5] 7.00-8.00 sec 281 MBytes 2.36 Gbits/sec 0 748 KBytes

[ 5] 8.00-9.00 sec 280 MBytes 2.35 Gbits/sec 0 749 KBytes

[ 5] 9.00-10.00 sec 281 MBytes 2.36 Gbits/sec 0 749 KBytes

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.00 sec 2.73 GBytes 2.35 Gbits/sec 41 sender

[ 5] 0.00-10.00 sec 2.73 GBytes 2.35 Gbits/sec receiver

iperf Done.

root@rock-5b:/home/rock# taskset -c 1 iperf3 -c 192.168.83.63

Connecting to host 192.168.83.63, port 5201

[ 5] local 192.168.83.113 port 47968 connected to 192.168.83.63 port 5201

[ ID] Interval Transfer Bitrate Retr Cwnd

[ 5] 0.00-1.00 sec 227 MBytes 1.90 Gbits/sec 244 479 KBytes

[ 5] 1.00-2.00 sec 282 MBytes 2.37 Gbits/sec 0 689 KBytes

[ 5] 2.00-3.00 sec 280 MBytes 2.35 Gbits/sec 0 730 KBytes

[ 5] 3.00-4.00 sec 280 MBytes 2.35 Gbits/sec 0 744 KBytes

[ 5] 4.00-5.00 sec 281 MBytes 2.36 Gbits/sec 0 749 KBytes

[ 5] 5.00-6.00 sec 280 MBytes 2.35 Gbits/sec 0 755 KBytes

[ 5] 6.00-7.00 sec 281 MBytes 2.36 Gbits/sec 0 759 KBytes

[ 5] 7.00-8.00 sec 280 MBytes 2.35 Gbits/sec 0 762 KBytes

[ 5] 8.00-9.00 sec 281 MBytes 2.36 Gbits/sec 0 765 KBytes

[ 5] 9.00-10.00 sec 280 MBytes 2.35 Gbits/sec 0 768 KBytes

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate Retr

[ 5] 0.00-10.00 sec 2.69 GBytes 2.31 Gbits/sec 244 sender

[ 5] 0.00-10.00 sec 2.69 GBytes 2.31 Gbits/sec receiver

iperf Done.

But in RX direction performance sucks with defaults. IRQs 133 and 149 are on little cores. And even when moving them to big cores I’m stuck at 510 Mbits/sec. Also the weird cpufreq behaviour (even with performance cpufreq governor clocking the cores as low as 408 MHz) doesn’t help.
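For reference, moving an IRQ to other cores means writing a hex CPU bitmask to /proc/irq/N/smp_affinity. A minimal sketch follows; the helper names are hypothetical, and treating cpu4 to cpu7 as the big cores is an assumption based on the cpufreq policies 4 and 6 used in this thread. As noted above, this alone did not fix RX throughput here.

```shell
#!/bin/sh
# cpu_mask CPU...: print the hex bitmask covering the given CPU numbers.
cpu_mask() {
    mask=0
    for cpu in "$@"; do
        mask=$((mask | (1 << cpu)))
    done
    printf '%x\n' "$mask"
}

# pin_irq IRQ CPU...: restrict IRQ to the given CPUs (needs root).
pin_irq() {
    irq=$1
    shift
    cpu_mask "$@" > "/proc/irq/$irq/smp_affinity"
}
```

With those helpers, `pin_irq 133 4 5 6 7` and `pin_irq 149 4 5 6 7` would write the mask f0 for both network IRQs.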

This is stuff for deeper investigation. But let’s check PCIe power management first:

root@rock-5b:/home/rock# cat /sys/module/pcie_aspm/parameters/policy

default performance [powersave] powersupersave

Well, that doesn’t look right. Let’s switch to default et voilà:
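In the policy file the active setting is the one shown in brackets, and writing a policy name to the same file switches it. A small sketch of both steps; the function names are made up, and the optional file argument exists only so the helpers can be tried against a copy:

```shell
#!/bin/sh
# aspm_active [FILE]: print the ASPM policy currently shown in [brackets].
aspm_active() {
    sed -n 's/.*\[\([^]]*\)\].*/\1/p' \
        "${1:-/sys/module/pcie_aspm/parameters/policy}"
}

# aspm_set POLICY [FILE]: select another ASPM policy (needs root).
aspm_set() {
    echo "$1" > "${2:-/sys/module/pcie_aspm/parameters/policy}"
}
```

On the board, `aspm_active` would have printed powersave before the change, and `aspm_set default` performs the switch.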

Even on a little core 2.5GbE link saturated:

root@rock-5b:/home/rock# taskset -c 1 iperf3 -R -c 192.168.83.63

Connecting to host 192.168.83.63, port 5201

Reverse mode, remote host 192.168.83.63 is sending

[ 5] local 192.168.83.113 port 47980 connected to 192.168.83.63 port 5201

[ ID] Interval Transfer Bitrate

[ 5] 0.00-1.00 sec 272 MBytes 2.29 Gbits/sec

[ 5] 1.00-2.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 2.00-3.00 sec 281 MBytes 2.35 Gbits/sec

[ 5] 3.00-4.00 sec 281 MBytes 2.35 Gbits/sec

[ 5] 4.00-5.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 5.00-6.00 sec 279 MBytes 2.34 Gbits/sec

[ 5] 6.00-7.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 7.00-8.00 sec 280 MBytes 2.35 Gbits/sec

[ 5] 8.00-9.00 sec 281 MBytes 2.35 Gbits/sec

[ 5] 9.00-10.00 sec 280 MBytes 2.35 Gbits/sec

- - - - - - - - - - - - - - - - - - - - - - - - -

[ ID] Interval Transfer Bitrate

[ 5] 0.00-10.00 sec 2.73 GBytes 2.35 Gbits/sec sender

[ 5] 0.00-10.00 sec 2.73 GBytes 2.34 Gbits/sec receiver

iperf Done.

Some experiments with USB. Connected two SSDs in UAS capable USB3 enclosures:

root@rock-5b:/home/rock# lsusb -t

/: Bus 08.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 5000M

|__ Port 1: Dev 2, If 0, Class=Mass Storage, Driver=uas, 5000M

/: Bus 06.Port 1: Dev 1, Class=root_hub, Driver=xhci-hcd/1p, 5000M

|__ Port 1: Dev 2, If 0, Class=Mass Storage, Driver=uas, 5000M

root@rock-5b:/home/rock# lsusb

Bus 006 Device 002: ID 174c:55aa ASMedia Technology Inc. Name: ASM1051E SATA 6Gb/s bridge, ASM1053E SATA 6Gb/s bridge, ASM1153 SATA 3Gb/s bridge, ASM1153E SATA 6Gb/s bridge

Bus 008 Device 002: ID 152d:3562 JMicron Technology Corp. / JMicron USA Technology Corp. JMS567 SATA 6Gb/s bridge

The attempt to create an mdraid0 (to test for bottlenecks with the USB3 ports) seems to fail: dmesg output

I know, most readers will now recommend blacklisting UAS, but the symptoms may just be related to underpowering. Don’t know yet. At least there’s room for improvement (appending coherent_pool=2M to extlinux.conf)

I think in newer kernels coherent_pool auto-expands as needed and that setting is merely a starting point, so it shouldn’t hurt. I remember reading somewhere that it auto-expands, but I can’t remember in which version that was introduced.

Well, adjusting this (and providing one of the two USB3 enclosures with own power) fixed the issues.
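Appending the parameter can be scripted so that it is added once to each `append` line and not duplicated on repeated runs. A minimal sketch, assuming GNU sed (for `-i`) and an extlinux.conf that uses `append` lines for the kernel command line; the function name and the path in the usage note are assumptions:

```shell
#!/bin/sh
# set_coherent_pool SIZE FILE: add "coherent_pool=SIZE" to every "append"
# line in FILE that does not already carry a coherent_pool= setting.
set_coherent_pool() {
    size=$1
    file=$2
    sed -i -e '/^[[:space:]]*append[[:space:]]/{' \
           -e '/coherent_pool=/!s/$/ coherent_pool='"$size"'/' \
           -e '}' "$file"
}
```

Something like `set_coherent_pool 2M /boot/extlinux/extlinux.conf` followed by a reboot would apply it; check where extlinux.conf actually lives on your image first.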

But it seems every tweak I added over the years to Armbian (like coherent pool size or IRQ affinity and ondemand governor tweaks) needs to be applied to Radxa’s OS images too.

Testing the raid0 with a simple iozone -e -I -a -s 1000M -r 1024k -r 16384k -i 0 -i 1:

kB reclen write rewrite read reread

1024000 1024 258830 270261 341979 344249

1024000 16384 270022 271088 667757 679947

That was running on a little core and is total crap. Now on a big core (by prefixing the iozone call with taskset -c 7):

kB reclen write rewrite read reread

1024000 1024 475382 457597 374120 374863

1024000 16384 777913 771802 736511 734899

Still crappy but when checking with sbc-bench -m it’s obvious that CPU clockspeeds don’t ramp up quickly and to the max:

Time big.LITTLE load %cpu %sys %usr %nice %io %irq Temp

19:43:03: 408/1008MHz 0.35 3% 1% 0% 0% 1% 0% 32.4°C

19:43:08: 408/1008MHz 0.33 0% 0% 0% 0% 0% 0% 32.4°C

19:43:13: 816/1200MHz 0.38 13% 3% 0% 0% 8% 1% 32.4°C

19:43:18: 1416/1200MHz 0.43 13% 2% 0% 0% 10% 0% 32.4°C

19:43:23: 600/1008MHz 0.56 14% 4% 0% 0% 7% 1% 33.3°C

19:43:28: 408/1008MHz 0.51 1% 0% 0% 0% 0% 0% 32.4°C
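Without sbc-bench at hand, the clockspeeds the kernel reports can also be read straight from sysfs. A rough sketch; `show_cpufreq` is a made-up name, and scaling_cur_freq only reflects what the cpufreq driver believes, not a measured frequency:

```shell
#!/bin/sh
# show_cpufreq [ROOT]: print each cpufreq policy with its current clock in MHz.
# scaling_cur_freq is reported by the kernel in kHz.
show_cpufreq() {
    root=${1:-/sys/devices/system/cpu/cpufreq}
    for p in "$root"/policy*; do
        [ -r "$p/scaling_cur_freq" ] || continue
        khz=$(cat "$p/scaling_cur_freq")
        printf '%s: %d MHz\n' "${p##*/}" $((khz / 1000))
    done
}
```

Running it in a loop next to the benchmark, e.g. `while sleep 5; do show_cpufreq; done`, gives a similar picture to the monitoring output above.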

One echo 1 > /sys/devices/system/cpu/cpufreq/policy6/ondemand/io_is_busy later we’re at

kB reclen write rewrite read reread

1024000 1024 604081 603160 505333 506815

1024000 16384 791865 789173 775160 778706

Still not perfect (less than 800 MB/sec) but at least the big core immediately switches to highest clockspeed:

Time big.LITTLE load %cpu %sys %usr %nice %io %irq Temp

19:50:44: 408/1008MHz 0.00 2% 0% 0% 0% 0% 0% 32.4°C

19:50:49: 2400/1800MHz 0.00 5% 1% 0% 0% 3% 0% 32.4°C

19:50:54: 2400/1800MHz 0.08 12% 1% 0% 0% 11% 0% 32.4°C

19:50:59: 2400/1800MHz 0.15 13% 2% 0% 0% 9% 0% 34.2°C

19:51:04: 408/1008MHz 0.14 2% 0% 0% 0% 1% 0% 32.4°C

But 790 MB/s are close to what can be achieved with a RAID0 over two USB3 SuperSpeed buses since each bus maxes out at slightly above 400 MB/s.

Moral of the story: if you’re shipping with the ondemand cpufreq governor, you need to take care of io_is_busy and friends.

After adding the following to /etc/rc.local even on a little core storage performance is ok-ish:

echo default >/sys/module/pcie_aspm/parameters/policy

for cpufreqpolicy in 0 4 6 ; do

echo 1 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/io_is_busy

echo 25 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/up_threshold

echo 10 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/sampling_down_factor

echo 200000 > /sys/devices/system/cpu/cpufreq/policy${cpufreqpolicy}/ondemand/sampling_rate

done

This is the result tested with taskset -c 1 iozone -e -I -a -s 1000M -r 1024k -r 16384k -i 0 -i 1:

kB reclen write rewrite read reread

1024000 1024 524857 526483 458726 459194

1024000 16384 780470 774856 733638 734297
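A slightly more defensive variant of the rc.local loop above might discover the policies by globbing, so it skips any cluster that isn’t running ondemand and doesn’t depend on the policy numbers 0, 4 and 6. This is only a sketch with the same values as above; the function name and the optional root argument are made up:

```shell
#!/bin/sh
# tune_ondemand [ROOT]: apply the ondemand tweaks to every cpufreq policy
# that exposes an ondemand directory; other policies are left alone.
tune_ondemand() {
    root=${1:-/sys/devices/system/cpu/cpufreq}
    for dir in "$root"/policy*/ondemand; do
        [ -d "$dir" ] || continue    # governor not ondemand, or glob unmatched
        echo 1      > "$dir/io_is_busy"
        echo 25     > "$dir/up_threshold"
        echo 10     > "$dir/sampling_down_factor"
        echo 200000 > "$dir/sampling_rate"
    done
}
```

Calling `tune_ondemand` from /etc/rc.local after the pcie_aspm line would replace the explicit for loop.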