I noticed a few days ago that there’s an updated ROCK 5 ITX+ board available and immediately wondered what they changed, as I have one of the originals and can think of a few things that I wish were different about it. Turns out, the biggest change is removing the 4 SATA ports and adding another m.2 gen3 x2 socket (which can be 110mm) - and hyping it up as a way to get 6 SATA ports (via 2 hexa SATA adapters). Considering all of the trouble so far with keeping the SATA ports working between kernel releases, I don’t know if this is a good move or not. But it did make me decide to write down what I’d personally like out of a future ITX board based on the rk35xx platform…

I’d like to use this board to collapse a few functions down to one and save some power - in particular my home router, NAS, and media servers as well as some containers for simple services.

What’s missing now to do this? The biggest thing I would prefer is 10gbe via an SFP+ cage. My suggestion would be using 2 lanes of gen3 to accomplish this. I don’t have a preference of what controller to integrate, but a Mellanox ConnectX-3 might make sense as it’s well supported, PCIe gen3, and available as a single port. Or, just plumb 2 of these lanes to a PCIe slot (x2 electrical but open-ended mechanically to support longer cards) and let the user pick.

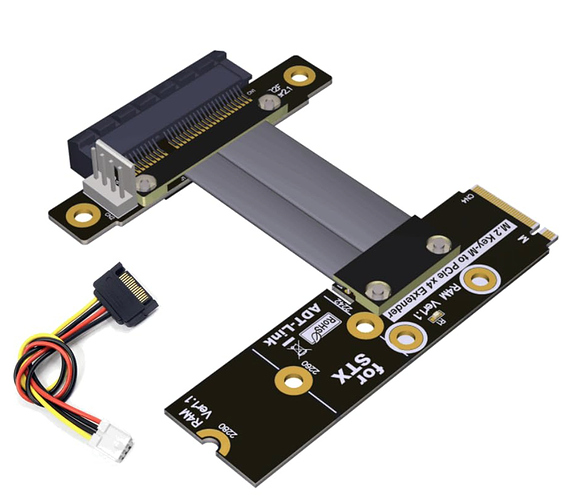

With a 10g port plugged into my switch and some VLANs, I’d drop one or both 2.5gbe interfaces and expose some of the native 1g interfaces that are available via the SoC for use as WAN ports. This would free up some of the gen2 PCIe lanes, which could then be used for storage. I’d then pull the SATA controller over from the gen3 lanes to gen2 and deal with the perf hit. Or again, expose 2 lanes of gen2 as another open-ended PCIe slot so the user can choose what to do with it.
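To put rough numbers on these lane choices, here's a back-of-the-envelope sketch. It uses only the standard PCIe line rates and encodings (5 GT/s with 8b/10b for gen2, 8 GT/s with 128b/130b for gen3); the function and table names are made up for illustration, and the results are ceilings before any protocol overhead, not measurements from this board.

```python
# Per-lane raw line rate (GT/s) and line-encoding efficiency per PCIe generation.
# These are the standard figures; real throughput is lower once packet
# overhead is counted, so treat the results as upper bounds.
PCIE_GENERATIONS = {
    2: (5.0, 8 / 10),     # gen2: 5 GT/s, 8b/10b encoding
    3: (8.0, 128 / 130),  # gen3: 8 GT/s, 128b/130b encoding
}


def pcie_gbps(gen: int, lanes: int) -> float:
    """Link bandwidth in Gbit/s after line encoding (hypothetical helper)."""
    rate, efficiency = PCIE_GENERATIONS[gen]
    return rate * efficiency * lanes


if __name__ == "__main__":
    # The three link widths discussed above: 10gbe NIC slot, and two
    # possible homes for a relocated SATA controller.
    for gen, lanes in [(3, 2), (2, 2), (2, 1)]:
        gbps = pcie_gbps(gen, lanes)
        print(f"gen{gen} x{lanes}: {gbps:.2f} Gbit/s (~{gbps / 8 * 1000:.0f} MB/s)")
```

Run as a script, this prints about 15.75 Gbit/s for gen3 x2, 8.00 Gbit/s for gen2 x2, and 4.00 Gbit/s for gen2 x1. So 2 lanes of gen3 leave comfortable headroom for a single 10g port, while a SATA controller on a single gen2 lane tops out around 500 MB/s for all ports combined, which is less than one SATA III port (roughly 600 MB/s) can move on its own. That's the perf hit I'd be signing up for.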

Leaving 2 lanes of gen3 for an m.2 slot would be fine, but depending on how many PCIe slots are exposed, this could be yet another slot instead. Or expose all 4 gen3 lanes as one slot.

Is there a way to expose the debug header as an RS232 interface at a slower speed? If so, do that for a console port. Bonus points if it’s RJ45 rather than DB9, but make it something that I can plug into my terminal server. Failing that, the datasheet shows there’s a bunch of TTL UARTs available that could be plumbed out to plugs. I get that we wouldn’t see anything prior to the kernel entrypoint, but I could still run a getty on it or attach a modem (my Dreamcast has to get online somehow!).

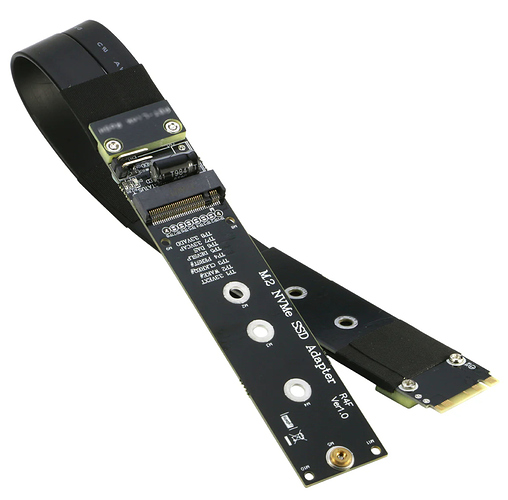

The m.2 gen2 x1 slot that’s there now for wireless? I’d much rather have that for another (slower) SSD for the root filesystem. This thing is gonna be very well connected via cables and I have dedicated access points, so there’s no need for wireless, and if I ever do need it, USB is an option.

Find a way to fit the 40 pin GPIO block in there.

Do people actually use the Roobi installer on the eMMC and leave it there? I dropped that thing fast and put my own install onto it. Increase the size of the eMMC to at least 16GB, as 8GB isn’t much in 2025 for a general purpose Linux install even without a windowing environment.

Yeah, that’d be a tight board.