Step 1. Install the latest system image

You can get system images from https://github.com/radxa/debos-radxa/releases

After flashing the image and booting the board, check the kernel version:

rock@rock-5b:~$ sudo su

root@rock-5b:~# uname -r

5.10.66-14-rockchip-g05483899648a
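The check above can also be scripted. The following is a minimal sketch that tests whether the running kernel belongs to the 5.10 series shown in the output; the helper name `is_kernel_5_10` is hypothetical, and treating 5.10 as the expected series is an assumption based only on the release printed above.

```shell
#!/bin/sh
# Hypothetical helper: succeeds if a kernel release string is in the 5.10 series.
# Assumption: the image used in this guide ships a 5.10.x kernel, as shown above.
is_kernel_5_10() {
    case "$1" in
        5.10.*) return 0 ;;
        *)      return 1 ;;
    esac
}

release="$(uname -r)"
if is_kernel_5_10 "$release"; then
    echo "kernel $release: 5.10 series"
else
    echo "kernel $release: not 5.10 series, results may differ from this guide"
fi
```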

Step 2. Get the prebuilt RKNN2 YOLOv5 demo

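Download the prebuilt YOLOv5 demo from the releases page of https://github.com/radxa/rknpu2. GitHub release links redirect, so pass -L to follow the redirect and -O to save the file under its remote name:

root@rock-5b:~# curl -L -O https://github.com/radxa/rknpu2/releases/download/20220512/rknn_yolov5_demo_linux_20220512.tar.gz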
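Because release downloads go through a redirect, it can be worth confirming that the saved file really is a gzip-compressed tar archive and not an HTML page before extracting it. A minimal sketch follows; the helper name `is_valid_tarball` is hypothetical and relies only on `tar -tzf` being able to list the archive.

```shell
#!/bin/sh
# Hypothetical helper: succeeds only if the file can be listed as a
# gzip-compressed tar archive; a truncated download or an HTML page fails.
is_valid_tarball() {
    tar -tzf "$1" >/dev/null 2>&1
}
```

For example, `is_valid_tarball rknn_yolov5_demo_linux_20220512.tar.gz && echo "archive OK"` prints a confirmation only when the download is intact.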

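Step 3. Run the RKNN2 YOLOv5 demo

Extract the archive, enter the demo directory, and add the bundled ./lib directory to the library search path: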

root@rock-5b:~# tar -xvf rknn_yolov5_demo_linux_20220512.tar.gz

root@rock-5b:~# cd rknn_yolov5_demo_linux

root@rock-5b:~/rknn_yolov5_demo_linux# export LD_LIBRARY_PATH=./lib

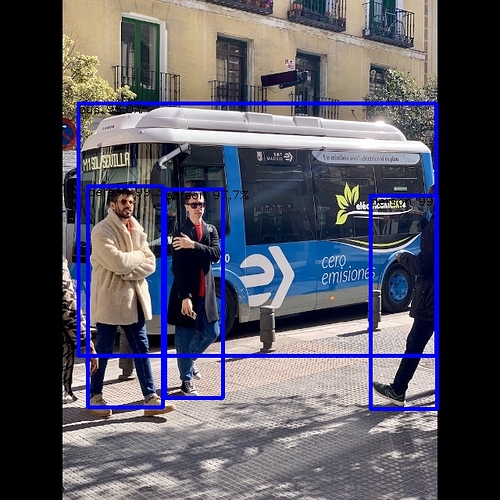

root@rock-5b:~/rknn_yolov5_demo_linux# ./rknn_yolov5_demo ./model/RK3588/yolov5s-640-640.rknn ./model/bus.jpg

post process config: box_conf_threshold = 0.50, nms_threshold = 0.60

Read ./model/bus.jpg ...

img width = 640, img height = 640

Loading mode...

sdk version: 1.2.0 (1867aec5b@2022-01-14T15:16:40) driver version: 0.7.2

model input num: 1, output num: 3

index=0, name=images, n_dims=4, dims=[1, 640, 640, 3], n_elems=1228800, size=4915200, fmt=NHWC, type=FP32, qnt_type=AFFINE, zp=-128, scale=0.003922

index=0, name=output, n_dims=5, dims=[1, 3, 85, 80], n_elems=1632000, size=1632000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=77, scale=0.080445

index=1, name=371, n_dims=5, dims=[1, 3, 85, 40], n_elems=408000, size=408000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=56, scale=0.080794

index=2, name=390, n_dims=5, dims=[1, 3, 85, 20], n_elems=102000, size=102000, fmt=NCHW, type=INT8, qnt_type=AFFINE, zp=69, scale=0.081305

model is NHWC input fmt

model input height=640, width=640, channel=3

rga_api version 1.7.0_[1]

once run use 25.492000 ms

loadLabelName ./model/coco_80_labels_list.txt

person @ (474 250 559 523) 0.996784

person @ (112 238 208 521) 0.992814

bus @ (100 132 558 455) 0.980211

person @ (211 242 285 509) 0.976798

loop count = 10 , average run 33.581600 ms
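The timing and detection lines in this log are easy to post-process. The sketch below converts the average run time into frames per second and prints the size of each detected box. Both helper names are hypothetical, the line formats are assumed to match the output shown above, and the four box values are assumed to be left, top, right, and bottom in pixels.

```shell
#!/bin/sh
# Hypothetical helpers for reading the demo log on stdin.

# Print average throughput in frames per second from the "average run" line.
# The run time in milliseconds is the second-to-last field of that line.
avg_fps() {
    awk '/average run/ { printf "%.1f\n", 1000 / $(NF - 1) }'
}

# Print "label WIDTHxHEIGHT confidence" for each detection line.
# Assumption: box values are left, top, right, bottom in pixels.
summarize_detections() {
    awk '/ @ \(/ {
        label = $0
        sub(/ @ .*/, "", label)   # keep the text before the at sign as the label
        gsub(/[()]/, "")          # drop parentheses so the box values split cleanly
        printf "%s %dx%d %.2f\n", label, $(NF - 2) - $(NF - 4), $(NF - 1) - $(NF - 3), $NF
    }'
}
```

If the demo output is saved to a file (for example by piping the run through `tee run.log`, where `run.log` is a name chosen here for illustration), then `avg_fps < run.log` prints 29.8 for the 33.581600 ms average above, and `summarize_detections < run.log` prints one line per detection, such as `bus 458x323 0.98`.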

The demo saves the annotated image as out.jpg in the current directory; open it to check the detected boxes.
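The tensor attributes in the log list a zero point (zp) and a scale for each INT8 output. Under the usual affine quantization convention, which is assumed here to be what the demo's post-processing applies, a raw value q maps back to a real value as (q - zp) * scale. A minimal sketch with a hypothetical `dequantize` helper:

```shell
#!/bin/sh
# Hypothetical helper: dequantize Q ZP SCALE prints (Q - ZP) * SCALE.
# Assumption: standard affine (asymmetric) quantization.
dequantize() {
    awk -v q="$1" -v zp="$2" -v scale="$3" \
        'BEGIN { printf "%.6f\n", (q - zp) * scale }'
}

# Raw value 127 from the first output tensor (zp=77, scale=0.080445 in the log).
dequantize 127 77 0.080445   # prints 4.022250
```

A raw value equal to the zero point always maps to 0, which is why each tensor carries its own zp and scale pair.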