Why would you want to reorder or renumber the CPUs? Is it for technical reasons?

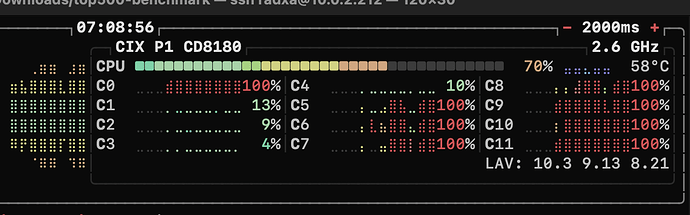

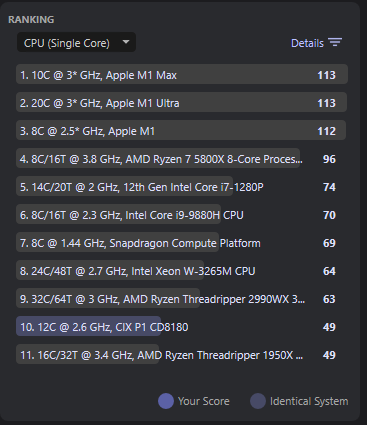

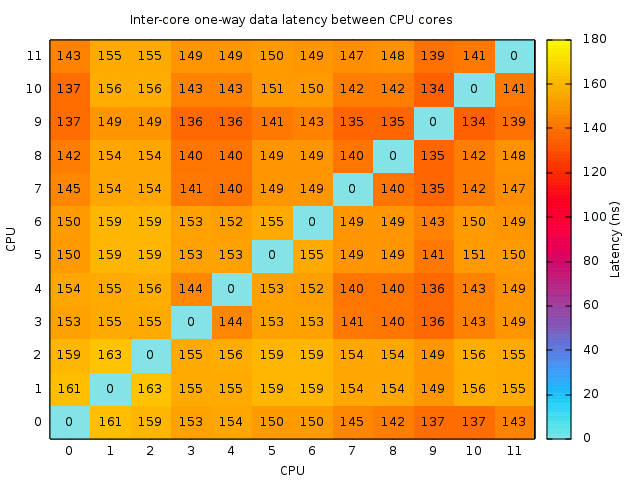

To make it less of a pain to figure out and assemble clusters. Usually on big.LITTLE (and generally on many-core systems) you use “taskset” with everything to assign tasks to the preferred cluster. The same goes for IRQs, which are often assigned using a simple bit rotation written in a shell “for” loop. Having the CPUs in random order makes it super complicated to perform manual bindings. Here’s what we currently have:

- cpu0: core 2 of cluster 2
- cpu1: core 0 of cluster 0
- cpu2: core 1 of cluster 0
- cpu3: core 2 of cluster 0
- cpu4: core 3 of cluster 0
- cpu5: core 0 of cluster 1
- cpu6: core 1 of cluster 1
- cpu7: core 2 of cluster 1
- cpu8: core 3 of cluster 1
- cpu9: core 0 of cluster 2
- cpu10: core 1 of cluster 2
- cpu11: core 3 of cluster 2

For manual handling it’s a real pain. I’m currently binding processes using “taskset -c 0,9-11” and “taskset -c 5-8”, neither of which is usual or natural. Even “top” is hard to follow in real time.
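To illustrate how awkward this gets, here is a rough Python sketch that derives the per-cluster CPU lists from the mapping listed above, along with round-robin IRQ affinity masks for one cluster. The names `TOPOLOGY`, `cpus_by_cluster`, `to_cpu_list` and `irq_masks` are made up for this example, and the mask format assumes the usual hexadecimal bitmask written to `/proc/irq/<n>/smp_affinity`. It is only meant to show the shape of the problem, not a tool I actually use.

```python
# Logical CPU -> (cluster, core), copied from the list in this post.
TOPOLOGY = {
    0: (2, 2),
    1: (0, 0), 2: (0, 1), 3: (0, 2), 4: (0, 3),
    5: (1, 0), 6: (1, 1), 7: (1, 2), 8: (1, 3),
    9: (2, 0), 10: (2, 1), 11: (2, 3),
}


def cpus_by_cluster(topology):
    """Group logical CPU numbers by cluster id, in ascending CPU order."""
    clusters = {}
    for cpu, (cluster, _core) in sorted(topology.items()):
        clusters.setdefault(cluster, []).append(cpu)
    return clusters


def to_cpu_list(cpus):
    """Format CPU numbers as a `taskset -c` style list, e.g. '0,9-11'."""
    cpus = sorted(cpus)
    if not cpus:
        return ""
    parts = []
    start = prev = cpus[0]
    for cpu in cpus[1:]:
        if cpu == prev + 1:
            prev = cpu
            continue
        # The run ended: emit it and start a new one.
        parts.append(str(start) if start == prev else f"{start}-{prev}")
        start = prev = cpu
    parts.append(str(start) if start == prev else f"{start}-{prev}")
    return ",".join(parts)


def irq_masks(cpus, irq_count):
    """Round-robin one CPU per IRQ; return hex masks (assumed smp_affinity format)."""
    return [format(1 << cpus[i % len(cpus)], "x") for i in range(irq_count)]


if __name__ == "__main__":
    for cluster, cpus in cpus_by_cluster(TOPOLOGY).items():
        print(f"cluster {cluster}: taskset -c {to_cpu_list(cpus)}; "
              f"irq masks {irq_masks(cpus, 4)}")
```

With contiguous numbering, the rotation would be a plain shift from a base bit. Here cluster 2 needs an explicit CPU list because cpu0 sits apart from cpu9-11.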

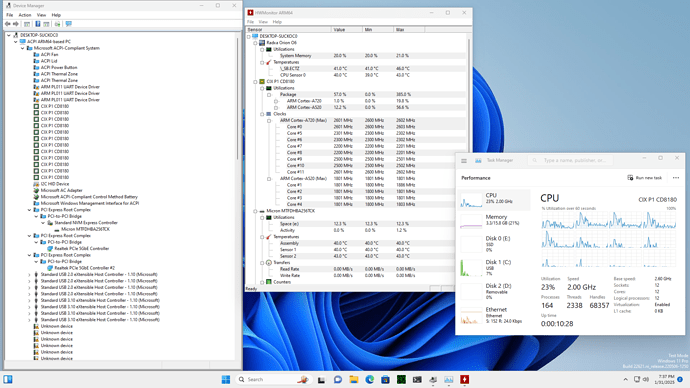

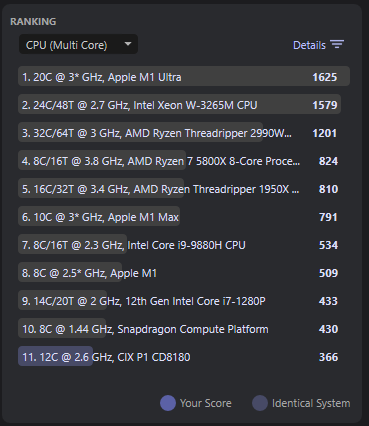

Ideally, clusters would be arranged from biggest to smallest, so that the first 4 CPUs would be the biggest cores, the next 4 the middle cores, and the final 4 the A520 cores (as drawn on the SoC diagram). That’s what would make CPU bindings the most agnostic/portable while getting the maximum performance (e.g. using only 4 cores just requires 0-3, and using 8 means 0-7, still quite performant). But the reverse approach (0-3=A520, 4-7=middle, 8-11=big) also works; it just requires remembering to use 8-11 for the 4 fastest cores and 4-11 for the 8 fastest.
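The arithmetic behind both orderings is trivial, which is the whole point. A tiny sketch, assuming 12 CPUs sorted strictly by cluster size (`fastest_cpus` is just a name picked for this example):

```python
def fastest_cpus(count, total=12, big_first=True):
    """Return the `taskset -c` range covering the `count` fastest CPUs.

    Assumes CPUs are numbered contiguously by cluster, either from
    biggest to smallest (big_first=True) or smallest to biggest.
    """
    if not 0 < count <= total:
        raise ValueError("count must be between 1 and total")
    first = 0 if big_first else total - count
    last = first + count - 1
    return str(first) if first == last else f"{first}-{last}"


if __name__ == "__main__":
    for n in (4, 8):
        print(n, fastest_cpus(n), fastest_cpus(n, big_first=False))
```

With big-first ordering the range always starts at 0, so the same binding keeps working on a machine with a different core count. The reverse ordering needs the total to be known.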

I can understand why the BIOS boots from one of the biggest CPUs to minimize boot time during decompression (though I’m pretty much convinced the difference is invisible), and it turns out that this chosen CPU becomes CPU0. Thus I think it’s fine if CPUs are arranged from biggest to smallest.

I tried to modify the DTS to have the order 10,11,8,9,4,5,6,7,0,1,2,3, but I noticed that while swapping one core with another of the same cluster is OK (e.g. I can swap 4 and 5), any swap across clusters causes a reboot (e.g. just swapping 4 and 3 crashes).

Hoping this helps!