I am currently running Frigate NVR on the ROCK 5B with a Google Coral. Is there a way to switch it to use the ROCK 5B's NPU?

IMX415 + NPU demo on ROCK 5B

Please check how to convert it here: https://github.com/rockchip-linux/rknn-toolkit2
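For reference, here is a minimal sketch of the rknn-toolkit2 conversion flow in Python. It assumes the toolkit is installed on an x86_64 host and that the input is a TFLite detector. The `mean`/`std` defaults, `quantize=True`, the `dataset.txt` calibration list, and the function name `convert_tflite_to_rknn` are assumptions that you need to adapt to the actual model. The examples in the repo above show the settings a given model needs.

```python
# Sketch only: assumes rknn-toolkit2 is installed (it runs on an x86_64 host).
try:
    from rknn.api import RKNN
except ImportError:  # lets the module load where the toolkit is absent
    RKNN = None


def _check(step, ret):
    # rknn-toolkit2 load/build/export calls return 0 on success.
    if ret != 0:
        raise RuntimeError(f"{step} failed with code {ret}")


def convert_tflite_to_rknn(tflite_path, rknn_path, dataset_txt,
                           mean=(0, 0, 0), std=(1, 1, 1),
                           quantize=True, rknn_factory=None):
    """Convert a TFLite detector to an .rknn file for the RK3588 NPU.

    ASSUMPTIONS: mean/std (raw 0-255 input) and quantize=True are placeholders;
    match them to the model. dataset_txt lists calibration image paths, one per line.
    """
    factory = rknn_factory or RKNN
    if factory is None:
        raise RuntimeError("rknn-toolkit2 is not installed")
    rknn = factory()
    try:
        rknn.config(mean_values=[list(mean)], std_values=[list(std)],
                    target_platform="rk3588")
        _check("load_tflite", rknn.load_tflite(model=tflite_path))
        _check("build", rknn.build(do_quantization=quantize,
                                   dataset=dataset_txt if quantize else None))
        _check("export_rknn", rknn.export_rknn(rknn_path))
        return rknn_path
    finally:
        # Always free the toolkit's resources, even if a step failed.
        rknn.release()
```

Calling `convert_tflite_to_rknn("model.tflite", "model.rknn", "dataset.txt")` writes `model.rknn` for the RK3588 NPU. The optional `rknn_factory` argument exists only so that the call sequence can be exercised without the toolkit.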

I used the already-converted models from Radxa and Rockchip (latest).

Once you (or someone else) manage to convert it, please let me know so I can try to run it and see how it compares.

This is fantastic. What OS did you use to run NPU detection? Thanks

The first screenshot is from Ubuntu 22.04 with no desktop (CLI only) at 1920x1080. The other one, with a graphical desktop, is Debian 11.5 (X11), which I lost when my eMMC died. I plan to redo everything on NVMe when it arrives, which may take some time. I think the OS is irrelevant; you need a lean and mean distro with HW acceleration. Maybe better results are possible with Wayland.

@flyingRich

Did you convert the Frigate TensorFlow Lite model?

I think it is this one:

Running the demo with SDL3.

Here is a pre-built package for Debian/Ubuntu:

Note that the API is changing: what works today may not work tomorrow. Porting from SDL2 to SDL3 is getting harder.

I want to start playing with the NPU. Have you posted your code to GitHub?

My plan is to get an RTSP stream from an IP camera, run it through the ROCK 5 NPU, and output another H.264 stream on the local network.

I’m lacking documentation on how to do the first two steps.

No, I haven’t. I just recreated Debian 11 and tried to restore the environment I lost with the two failed eMMC modules. I’m still waiting for the M.2 SATA adapter and for a way to convert the models (if that’s possible). I think I’ll have to learn how to do it myself.

Regarding your RTSP project, I think the only way to achieve that is with GStreamer. GStreamer has a ton of documentation but zero practical examples.
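Here is a rough sketch of the two GStreamer pipelines such a project could use, built as strings in Python. It assumes that the Rockchip GStreamer plugins (`mppvideodec`, `mpph264enc`) are installed and that the camera sends H.264. The NPU step itself would run in the application, between the `appsink` and the `appsrc`. The URL, host, port, and function names are placeholders, and this is not a tested setup.

```python
# Sketch: GStreamer pipeline strings for RTSP in -> NPU (in the app) -> H.264 out.
# ASSUMES the Rockchip MPP GStreamer plugins (mppvideodec, mpph264enc) are installed.


def capture_pipeline(rtsp_url, latency_ms=200):
    # rtspsrc pulls the camera stream, mppvideodec decodes on the VPU,
    # and appsink hands BGR frames to the application, dropping stale ones.
    return " ! ".join([
        f"rtspsrc location={rtsp_url} latency={latency_ms}",
        "rtph264depay",
        "h264parse",
        "mppvideodec",
        "videoconvert",
        "video/x-raw,format=BGR",
        "appsink drop=true max-buffers=1",
    ])


def output_pipeline(host, port):
    # appsrc receives annotated frames, mpph264enc encodes on the VPU,
    # and rtph264pay + udpsink send RTP/H.264 to another machine on the LAN.
    return " ! ".join([
        "appsrc",
        "videoconvert",
        "mpph264enc",
        "h264parse",
        "rtph264pay config-interval=1 pt=96",
        f"udpsink host={host} port={port}",
    ])
```

If OpenCV is built with GStreamer support, the first string could go to `cv2.VideoCapture(pipeline, cv2.CAP_GSTREAMER)` and the second to `cv2.VideoWriter(pipeline, cv2.CAP_GSTREAMER, 0, fps, (width, height))`. The `videoconvert` to BGR runs on the CPU, so it may become the bottleneck at 4K.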

Maybe this could be a start:

So far, what I see is that the Radxa repository mirrors provide no hardware-accelerated GStreamer support by default:

gstreamer1.0-rockchip1 : Depends: librockchip-mpp1 but it is not installable

To solve that, one could use the Rockchip MPP release for GStreamer, but it dates from 2017 and does not support H.265 or any newer hardware. That means the newest develop source must be compiled instead, which comes with many more problems.

To build it from source, you must spend countless hours fixing every single problem the compilation throws up (I have spent 2 hours so far, right after your reply).

So, Rockchip’s specs are nice and all, but currently there is no software support, and the only official GitHub repo has 154 issues and 3-week-old commits that don’t help with anything.

I guess we need to wait a year or two to actually be able to use the hardware as advertised.

There is a little gem here:

There you will find all the pre-built deb packages, if you don’t want to spend time building, compiling, and fixing everything.

Start with a minimal image that has no broken dependencies, and then install the required packages. I think rkr1 was meant for kernel 5.10.66 (~72 - patches) and rkr4 for 5.10.110, but rkr1 will work on 5.10.110 with some minor features missing.

Take care with the order in which you install the packages. And don’t make the mistake of installing everything at once and then rebooting. Install one package, reboot and check, then repeat…

Tip:

- librga

- libmpp

- xserver (X11)

- etc…
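The "one package at a time" advice can be sketched as a dependency-ordered plan in Python using the standard `graphlib` module (3.9+). Only the edge where `gstreamer1.0-rockchip1` depends on `librockchip-mpp1` comes from the apt error quoted earlier in the thread. The other names mirror the tip list, and their edges are hypothetical placeholders. Replace them with what `apt-cache depends` reports for the debs you actually download.

```python
# Sketch: derive a one-package-at-a-time install order from known dependencies.
from graphlib import TopologicalSorter

# package -> packages it needs installed first.
# Only gstreamer1.0-rockchip1 -> librockchip-mpp1 comes from the apt error above;
# the xserver -> librga edge and the exact package names are HYPOTHETICAL.
DEPENDS = {
    "librockchip-mpp1": set(),
    "librga": set(),
    "gstreamer1.0-rockchip1": {"librockchip-mpp1"},
    "xserver": {"librga"},  # hypothetical edge
}


def install_plan(depends):
    """Return install steps, each package followed by a reboot-and-check step."""
    steps = []
    for pkg in TopologicalSorter(depends).static_order():
        steps.append(f"install {pkg}")
        steps.append("reboot and check")
    return steps


if __name__ == "__main__":
    for step in install_plan(DEPENDS):
        print(step)
```

Running it prints each install followed by a "reboot and check" step. A dependency cycle in the map would raise `graphlib.CycleError`.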

Yeah, like I said, nothing that’s installable and usable out of the box.

Thanks for the link, though.

Can you explain what this is for?

Not sure I understand the question; I will try to answer briefly.

Those are deb packages built by Rockchip members to get most of the board’s features (HW video acceleration, 3D, 2D, encoder, and decoder) working as expected on their BSP/SDK.

If you don’t want to spend hours, weeks, or months figuring out how to build it yourself, just install the required debs to make GStreamer work. To avoid dependency errors like the one above, start with a clean image and then install what you need.

You can use a ready-to-use image if you don’t want those hassles. At some point, though, you will need to get your hands dirty to fix things that break when you update to the latest kernel version.

Just want to leave this here; it might be of help.

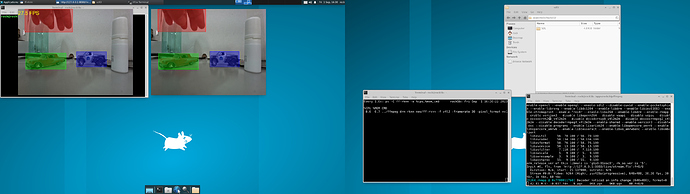

I have extended the demo so that the inference stream can be played (kind of) in real time from a remote server (RTMP).

As the video demo shows, the detected objects are pushed to the RTMP server, and the stream can be played with ffplay over RTMP or HTTP.
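The code for this extension has not been posted, so the following is only one possible way to do the same thing, sketched in Python. It pipes raw BGR frames (with the detections drawn on them) into `ffmpeg` on stdin and pushes them to an RTMP server, then plays the stream with `ffplay`. The server URL is a placeholder. `libx264` encodes on the CPU; hardware encoding would need an ffmpeg build with Rockchip MPP support.

```python
# Sketch: push annotated frames to an RTMP server with ffmpeg, then watch with ffplay.
# Not the demo's actual code; the URL and frame size are placeholders.


def ffmpeg_push_cmd(url, width, height, fps=30):
    # Raw BGR frames (OpenCV's layout) arrive on stdin ("-i -").
    # libx264 with zerolatency keeps delay low; FLV is the container RTMP expects.
    return [
        "ffmpeg", "-f", "rawvideo", "-pix_fmt", "bgr24",
        "-s", f"{width}x{height}", "-r", str(fps), "-i", "-",
        "-c:v", "libx264", "-preset", "ultrafast", "-tune", "zerolatency",
        "-pix_fmt", "yuv420p", "-f", "flv", url,
    ]


def ffplay_cmd(url):
    # -fflags nobuffer reduces ffplay's input buffering for live viewing.
    return ["ffplay", "-fflags", "nobuffer", url]
```

To use it, start the encoder with `proc = subprocess.Popen(ffmpeg_push_cmd(url, w, h), stdin=subprocess.PIPE)` and write each annotated frame with `proc.stdin.write(frame.tobytes())`.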

The screencast (made with FFmpeg, 3840x1080, Xfce) and the video demo are available here:

https://mega.nz/file/pHJWXAaK#-jRlbgicsAoNbTOlMDkA73vm272Jc_vSHSSsc_wPYgg

Eventually, if time permits, I will show how it was made here:

For Frigate on the RK3588, there’s a repo with a branch specific to the Orange Pi 5 and RK3588.

After compiling the Docker image with `make arm64`, you should configure your Frigate detectors.

This branch comes with two RK3588-specific Frigate detectors: "armnn", which uses ARM-optimized code, and "rknn", which uses the RK3588’s onboard NPU. armnn works for basic detection with one 4K camera, while the rknn detector is hit-and-miss right now.
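As a starting point for the detector config, here is a small Python helper that emits the `detectors:` section of Frigate’s `config.yml`. The `armnn` and `rknn` type names come from the branch description above. It is unknown whether the branch needs further per-detector options, so none are added. The helper name is hypothetical.

```python
# Sketch: build the `detectors:` section of a Frigate config.yml for the rk3588 branch.
# The type names come from the branch description; any extra per-detector options
# the branch may need are unknown here and intentionally omitted.
KNOWN_TYPES = ("armnn", "rknn")


def detectors_yaml(detector_type="armnn", name=None):
    """Return YAML text; defaults to armnn, reported above as the more reliable one."""
    if detector_type not in KNOWN_TYPES:
        raise ValueError(f"unknown detector type: {detector_type!r}")
    name = name or detector_type
    return f"detectors:\n  {name}:\n    type: {detector_type}\n"


print(detectors_yaml())
```

Switching to the NPU would then be a matter of `detectors_yaml("rknn")`, keeping in mind that this detector is described above as hit-and-miss.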

Thank you for sharing this interesting data. What type of neural network are you using for inference? YOLOv8/variants or something different?

YOLOv5s (Rockchip)

@avaf, can you share the build steps for creating sdl2-cam? I’m trying to enhance SDL2 features to support streams at more resolutions.

Hi @Jagan, not sure I understood the question.

Will you work on the imx415 kernel driver to support more resolutions?

The SDL2 I used for the demo is the distro version, the old 2.0.20, with some hacks to make it work with libmali (I think Panfrost does not need them) and to render on screen (OpenGL ES 2 or KMS/DRM). It renders the frames as a texture.

I called it sdl2-cam because it does not use OpenCV to render, only to grab the frames.
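On supporting more resolutions: when an OpenCV-grabbed frame is uploaded into an SDL2 texture, `SDL_UpdateTexture` needs the row pitch in bytes. Below is a small Python sketch of that arithmetic. It assumes packed 8-bit BGR frames (OpenCV’s default) and an `SDL_PIXELFORMAT_BGR24` texture created at the frame size. It is not the sdl2-cam code.

```python
# Sketch: buffer sizes for uploading OpenCV frames into an SDL2 texture.
# ASSUMES packed BGR24 frames and an SDL_PIXELFORMAT_BGR24 texture of the same size.
BYTES_PER_PIXEL_BGR24 = 3  # OpenCV's cap.read() yields packed 8-bit BGR by default


def texture_pitch(width):
    # Bytes per row, passed as `pitch` to SDL_UpdateTexture.
    return width * BYTES_PER_PIXEL_BGR24


def frame_bytes(width, height):
    # Total size of one packed frame buffer.
    return texture_pitch(width) * height


for w, h in [(1920, 1080), (3840, 2160)]:
    print(f"{w}x{h}: pitch={texture_pitch(w)} bytes, frame={frame_bytes(w, h)} bytes")
```

For 1920x1080, this gives a pitch of 5760 bytes and 6,220,800 bytes per frame. For 3840x2160, it gives 11520 and 24,883,200 bytes.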

No 2FA for me on GitHub… (yet)… But I can point to the code it was based on if needed.