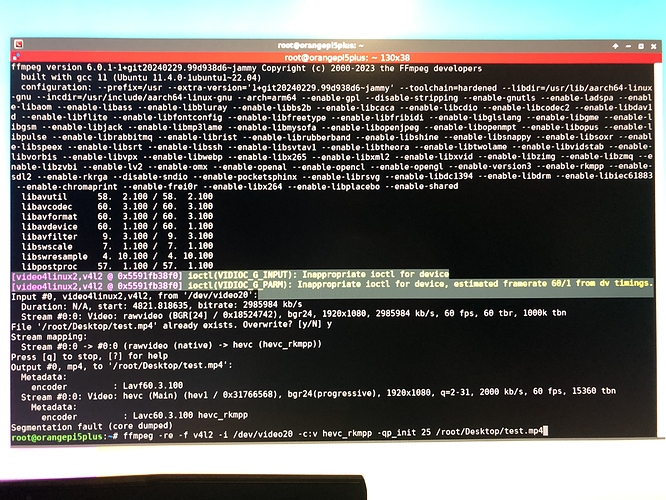

Hi all, I've been playing with ffmpeg and gst-launch for the past few days, and I'm now fairly sure that my test case, streaming 4K from the Radxa 4K camera to a WHIP endpoint, is currently not possible. Both tools are missing the plugins I need, and I have no idea how to merge the RK-HW libs with the available WHIP repos or vice versa. The best result I currently get is with this command:

```
gst-launch-1.0 v4l2src num-buffers=512 device=/dev/video11 io-mode=4 ! videoconvert ! \
  video/x-raw, format=NV12, width=1920, height=1080, framerate=30/1 ! \
  tee name=t ! queue ! mpph264enc ! queue ! h264parse ! mux. \
  flvmux streamable=true name=mux ! rtmpsink http://
```

With ffmpeg it also works, but the encoding quality is much worse:

```
ffmpeg -i /dev/video11 -c:v h264_rkmpp -video_size 1920x1080 -b:v 6M -maxrate 6M \
  -bufsize 1M -profile:v high -g:v 120 -f flv rtmp://
```

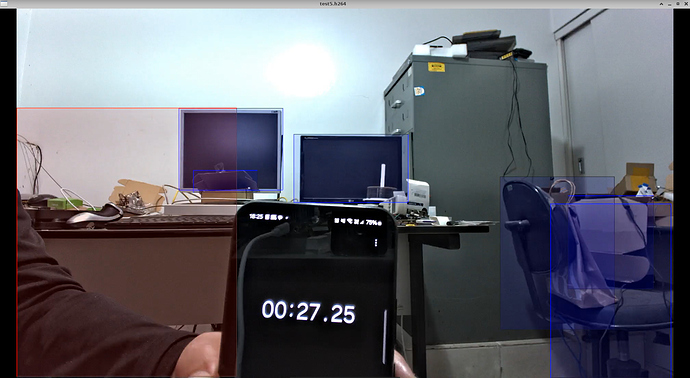

Also, the sensor doesn't seem to be centered in the camera: when I point the camera at myself, it looks like I only see the top-left (or top-right) quarter of the sensor…

Any hints? Thank you in advance.

I’ll give it a shot and report back! Thank you!!