The HAT's power-up seems quite unstable. Only twice, each time after a reboot, did I get both drives working. On a few occasions only one drive showed up. Most of the time neither drive shows up, although the PCIe bridges do. Here is the dmesg output from a boot where neither drive appears but the PCIe bridges do:

```
[ 5.833047] vcc3v3_pcie20: 3300 mV, enabled
[ 5.833149] reg-fixed-voltage vcc3v3-pcie20: Looking up vin-supply from device tree
[ 5.833156] vcc3v3_pcie20: supplied by vcc5v0_sys
[ 5.833241] reg-fixed-voltage vcc3v3-pcie20: vcc3v3_pcie20 supplying 3300000uV
[ 6.742913] PCI: CLS 0 bytes, default 64
[ 7.215345] rk-pcie fe4f0000.pcie: invalid prsnt-gpios property in node
[ 7.215360] rk-pcie fe4f0000.pcie: Looking up vpcie3v3-supply from device tree
[ 7.216161] rk-pcie fe4f0000.pcie: max MSI vector is 8
[ 7.216173] rk-pcie fe4f0000.pcie: Missing *config* reg space
[ 7.216239] rk-pcie fe4f0000.pcie: host bridge /pcie@fe4f0000 ranges:
[ 7.216278] rk-pcie fe4f0000.pcie: err 0x00fc000000..0x00fc0fffff -> 0x00fc000000
[ 7.216304] rk-pcie fe4f0000.pcie: IO 0x00fc100000..0x00fc1fffff -> 0x00fc100000
[ 7.216321] rk-pcie fe4f0000.pcie: MEM 0x00fc200000..0x00fdffffff -> 0x00fc200000
[ 7.216332] rk-pcie fe4f0000.pcie: MEM 0x0100000000..0x013fffffff -> 0x0100000000
[ 7.216373] rk-pcie fe4f0000.pcie: Missing *config* reg space
[ 7.216456] rk-pcie fe4f0000.pcie: invalid resource
[ 7.275009] ehci-pci: EHCI PCI platform driver
[ 7.423390] rk-pcie fe4f0000.pcie: PCIe Linking... LTSSM is 0x3
[ 7.505630] rk-pcie fe4f0000.pcie: PCIe Link up, LTSSM is 0x130011
[ 7.505804] rk-pcie fe4f0000.pcie: PCI host bridge to bus 0000:00
[ 7.505815] pci_bus 0000:00: root bus resource [bus 00-ff]
[ 7.505821] pci_bus 0000:00: root bus resource [??? 0xfc000000-0xfc0fffff flags 0x0]
[ 7.505827] pci_bus 0000:00: root bus resource [io 0x0000-0xfffff] (bus address [0xfc100000-0xfc1fffff])
[ 7.505832] pci_bus 0000:00: root bus resource [mem 0xfc200000-0xfdffffff]
[ 7.505837] pci_bus 0000:00: root bus resource [mem 0x100000000-0x13fffffff pref]
[ 7.505872] pci 0000:00:00.0: [1d87:3528] type 01 class 0x060400
[ 7.505948] pci 0000:00:00.0: supports D1 D2
[ 7.505953] pci 0000:00:00.0: PME# supported from D0 D1 D3hot
[ 7.511483] pci 0000:01:00.0: [1b21:1182] type 01 class 0x060400
[ 7.511915] pci 0000:01:00.0: enabling Extended Tags
[ 7.514839] pci 0000:01:00.0: PME# supported from D0 D3hot D3cold
[ 7.527463] pci 0000:01:00.0: bridge configuration invalid ([bus 00-00]), reconfiguring
[ 7.529009] pci 0000:02:03.0: [1b21:1182] type 01 class 0x060400
[ 7.529569] pci 0000:02:03.0: enabling Extended Tags
[ 7.530226] pci 0000:02:03.0: PME# supported from D0 D3hot D3cold
[ 7.544451] pci 0000:02:07.0: [1b21:1182] type 01 class 0x060400
[ 7.545045] pci 0000:02:07.0: enabling Extended Tags
[ 7.547831] pci 0000:02:07.0: PME# supported from D0 D3hot D3cold
[ 7.553067] pci 0000:02:03.0: bridge configuration invalid ([bus 00-00]), reconfiguring
[ 7.553110] pci 0000:02:07.0: bridge configuration invalid ([bus 00-00]), reconfiguring
[ 7.558639] pci_bus 0000:03: busn_res: [bus 03-ff] end is updated to 03
[ 7.564187] pci_bus 0000:04: busn_res: [bus 04-ff] end is updated to 04
[ 7.564218] pci_bus 0000:02: busn_res: [bus 02-ff] end is updated to 04
[ 7.564261] pci 0000:02:03.0: PCI bridge to [bus 03]
[ 7.564392] pci 0000:02:07.0: PCI bridge to [bus 04]
[ 7.564500] pci 0000:01:00.0: PCI bridge to [bus 02-04]
[ 7.564633] pci 0000:00:00.0: PCI bridge to [bus 01-ff]
[ 7.565917] pcieport 0000:00:00.0: PME: Signaling with IRQ 81
```

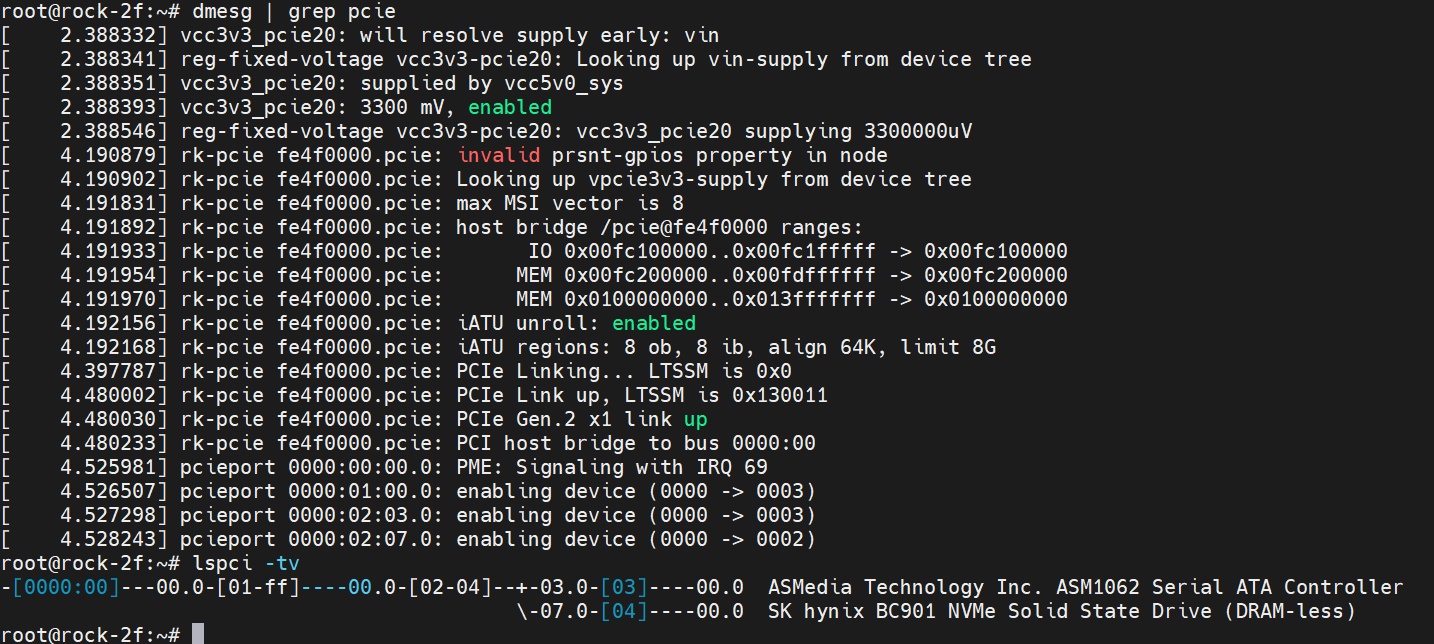
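To see at a glance which PCI functions the kernel actually enumerated on a given boot, the enumeration lines (`pci DDDD:BB:DD.F: [vendor:device] type NN class 0xCCCCCC`) can be pulled out of a saved log. This is a minimal sketch, assuming the line format shown in the log above; `enumerated_functions`, `is_bridge` and `is_nvme` are hypothetical helper names. A class code starting with `0604` is a PCI-to-PCI bridge and one starting with `0108` is a non-volatile memory (NVMe) controller, so on this boot the parser finds the four bridges and no NVMe device.

```python
import re
from typing import List, NamedTuple

# Matches enumeration lines such as:
#   [ 7.505872] pci 0000:00:00.0: [1d87:3528] type 01 class 0x060400
# "pcieport ..." and "pci_bus ..." lines do not match because "pci" must be
# followed by a space and then a full domain:bus:device.function address.
ENUM_RE = re.compile(
    r"\bpci (?P<addr>[0-9a-f]{4}:[0-9a-f]{2}:[0-9a-f]{2}\.[0-7]): "
    r"\[(?P<vendor>[0-9a-f]{4}):(?P<device>[0-9a-f]{4})\] "
    r"type (?P<hdr>[0-9a-f]{2}) class 0x(?P<cls>[0-9a-f]{6})"
)


class PciFunction(NamedTuple):
    addr: str         # e.g. "0000:02:03.0"
    vendor: str       # e.g. "1b21"
    device: str       # e.g. "1182"
    header_type: str  # "00" = endpoint, "01" = bridge
    class_code: str   # 24-bit code: base class, subclass, programming interface


def enumerated_functions(dmesg_text: str) -> List[PciFunction]:
    """Return every PCI function the kernel reported enumerating, in log order."""
    return [
        PciFunction(m["addr"], m["vendor"], m["device"], m["hdr"], m["cls"])
        for m in ENUM_RE.finditer(dmesg_text)
    ]


def is_bridge(f: PciFunction) -> bool:
    # Class 0x0604xx: PCI-to-PCI bridge.
    return f.class_code.startswith("0604")


def is_nvme(f: PciFunction) -> bool:
    # Class 0x0108xx: non-volatile memory controller (0x010802 for NVMe).
    return f.class_code.startswith("0108")
```

Save the kernel log with `dmesg > boot.log` and pass the file's contents to `enumerated_functions`; on a boot where both drives work, the result should also contain entries for which `is_nvme` is true.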

lspci:

```
00:00.0 PCI bridge: Fuzhou Rockchip Electronics Co., Ltd Device 3528 (rev 01)
01:00.0 PCI bridge: ASMedia Technology Inc. Device 1182
02:03.0 PCI bridge: ASMedia Technology Inc. Device 1182
02:07.0 PCI bridge: ASMedia Technology Inc. Device 1182
```

When it works, two NVMe drives should also appear below these bridges.

Check out the first-impressions videos about the Pi 5; many creators have made them.

PM me if you need any further explanation.